Cayenne Guide

1. Object Relational Mapping with Cayenne

1.1. Setup

System Requirements

- Java: Cayenne runtime framework and CayenneModeler GUI tool are written in 100% Java, and run on any Java-compatible platform. The minimum required JDK version depends on the version of Cayenne you are using, as shown in the following table:

| Cayenne Version | Java Version | Status |
|---|---|---|
| 4.0 | Java 1.7 or newer | Stable |
| 3.1 | Java 1.5 or newer | Stable |
| 3.0 | Java 1.5 | Aging |
| 1.2 / 2.0 | Java 1.4 | Legacy |
| 1.1 | Java 1.3 | Legacy |

- JDBC Driver: An appropriate DB-specific JDBC driver is needed to access the database. It can be included in the application or used in the web container DataSource configuration.

- Third-party Libraries: Cayenne runtime framework has a minimal set of required and a few more optional dependencies on third-party open source packages. See the "Including Cayenne in a Project" chapter for details.

Running CayenneModeler

CayenneModeler GUI tool is intended to work with object relational mapping projects. While you can edit your XML by hand, it is rarely needed, as the Modeler is a pretty advanced tool included in the Cayenne distribution. To obtain CayenneModeler, download the Cayenne distribution archive matching the OS you are using from https://cayenne.apache.org/download.html. Of course, Java needs to be installed on the machine where you are going to run the Modeler.

- The OS X distribution contains CayenneModeler.app at the root of the distribution disk image.

- The Windows distribution contains the CayenneModeler.exe file in the bin directory.

- The cross-platform distribution (targeting Linux, but as the name implies, compatible with any OS) contains a runnable CayenneModeler.jar in the bin directory. It can be executed either by double-clicking, or, if the environment is not configured to execute jars, by running from the command line:

$ java -jar CayenneModeler.jar

The Modeler can also be started from Maven. While it may look like an exotic way to start a GUI application, it has its benefits: there is no need to download the Cayenne distribution, the version of the Modeler always matches the version of the framework, and the plugin can find mapping files in the project automatically. So it is an attractive option for some developers. The Maven option requires a declaration in the POM:

<build>
    <plugins>
        <plugin>
            <groupId>org.apache.cayenne.plugins</groupId>
            <artifactId>cayenne-modeler-maven-plugin</artifactId>
            <version>4.0.3</version>
        </plugin>
    </plugins>
</build>

And then it can be run as:

$ mvn cayenne-modeler:run

| Name | Type | Description |
|---|---|---|
| modelFile | File | Name of the model file to open. |

1.2. Cayenne Mapping Structure

Cayenne Project

A Cayenne project is an XML representation of a model connecting database schema with Java classes. A project is normally created and manipulated via CayenneModeler GUI and then used to initialize Cayenne runtime. A project is made of one or more files. There’s always a root project descriptor file in any valid project. It is normally called cayenne-xyz.xml, where "xyz" is the name of the project.

The project descriptor can reference DataMap files, one per DataMap. DataMap files are normally called xyz.map.xml, where "xyz" is the name of the DataMap. For legacy reasons this naming convention is different from the convention for the root project descriptor above, and we may align it in future versions. Here is how a typical project might look on the file system:

$ ls -l
total 24
-rw-r--r--  1 cayenne  staff  491 Jan 28 18:25 cayenne-project.xml
-rw-r--r--  1 cayenne  staff  313 Jan 28 18:25 datamap.map.xml

DataMaps are referenced by name in the root descriptor:

<map name="datamap"/>

Map files are resolved by Cayenne by appending the ".map.xml" extension to the map name and resolving the resulting string relative to the root descriptor URI. The following sections discuss various ORM model objects, without regard to their XML representation. XML format details are not important to most Cayenne users.
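The map file resolution rule can be modeled with java.net.URI. This is a minimal sketch and not Cayenne's own lookup code. The class MapFileResolution and the file:/app/config location are hypothetical, and the sketch assumes the root descriptor is addressed by a hierarchical URI such as a file: URI.

```java
import java.net.URI;

// Illustrative model, not Cayenne source: derives a DataMap file location from the
// <map name="..."/> reference in the root project descriptor.
final class MapFileResolution {

    // Suffix appended to every DataMap name, per the naming convention described above.
    static final String MAP_SUFFIX = ".map.xml";

    // Appends ".map.xml" to the map name and resolves it against the root descriptor URI.
    // For a hierarchical URI, resolve() replaces the descriptor's file name, so the map
    // file is looked up in the same directory as the root descriptor.
    static URI resolveMap(URI rootDescriptor, String mapName) {
        return rootDescriptor.resolve(mapName + MAP_SUFFIX);
    }

    public static void main(String[] args) {
        URI root = URI.create("file:/app/config/cayenne-project.xml");
        // prints file:/app/config/datamap.map.xml
        System.out.println(resolveMap(root, "datamap"));
    }
}
```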

DataMap

DataMap is a container of persistent entities and other object-relational metadata. DataMap provides developers with a scope to organize their entities, but it does not provide a namespace for entities. In fact, all DataMaps present at runtime are combined in a single namespace. Each DataMap must be associated with a DataNode. This is how Cayenne knows which database to use when running a query.

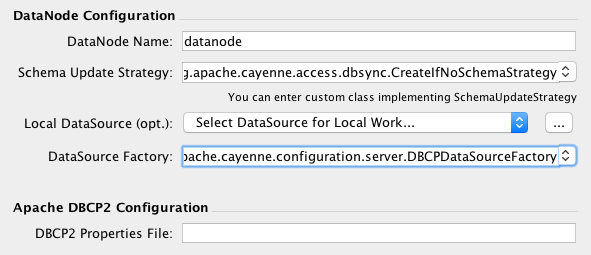

DataNode

DataNode is a model of a database. It is actually pretty simple: it has an arbitrary user-provided name and the information needed to create or locate a JDBC DataSource. Most projects only have one DataNode, though there may be any number of nodes if needed.

DbEntity

DbEntity is a model of a single DB table or view. DbEntity is made of DbAttributes that correspond to columns, and DbRelationships that map PK/FK pairs. DbRelationships are not strictly tied to FK constraints in the DB, and should be mapped for all logical "relationships" between the tables.

ObjEntity

ObjEntity is a model of a single persistent Java class. ObjEntity is made of ObjAttributes and ObjRelationships. Both correspond to entity class properties. However, ObjAttributes represent "simple" properties (normally things like String, numbers, dates, etc.), while ObjRelationships correspond to properties that have a type of another entity.

ObjEntity maps to one or more DbEntities. There’s always one "root" DbEntity for each ObjEntity. An ObjAttribute maps to a DbAttribute or an Embeddable. Most often the mapped DbAttribute belongs to the root DbEntity. Sometimes the mapping is done to a DbAttribute from another DbEntity that is somehow related to the root DbEntity. Such an ObjAttribute is called "flattened". Similarly, an ObjRelationship maps either to a single DbRelationship or to a chain of DbRelationships (a "flattened" ObjRelationship).
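To make the flattened case concrete, here is a minimal sketch that models a mapping as a path of DB-level names. It is not Cayenne's API. The class, the dot-separated path notation, and the names toArtist, ARTIST_NAME and toPaintingExhibits are assumptions for illustration. Cayenne's own ObjAttribute and ObjRelationship classes carry much more information than this.

```java
import java.util.Arrays;
import java.util.List;

// Illustrative model, not Cayenne's API. Assumption: an ObjAttribute mapping is written
// as a dot-separated path of zero or more DbRelationship names followed by a
// DbAttribute name, and an ObjRelationship mapping as a list of DbRelationship names.
final class FlattenedMapping {

    // "ARTIST_NAME" points at the root DbEntity; "toArtist.ARTIST_NAME" first follows
    // the toArtist DbRelationship to another DbEntity, so that attribute is flattened.
    static boolean isFlattenedAttribute(String dbAttributePath) {
        return dbAttributePath.indexOf('.') >= 0;
    }

    // A chain of more than one DbRelationship makes the ObjRelationship flattened.
    static boolean isFlattenedRelationship(List<String> dbRelationshipChain) {
        return dbRelationshipChain.size() > 1;
    }

    public static void main(String[] args) {
        System.out.println(isFlattenedAttribute("ARTIST_NAME"));          // false
        System.out.println(isFlattenedAttribute("toArtist.ARTIST_NAME")); // true
        System.out.println(isFlattenedRelationship(
                Arrays.asList("toPaintingExhibits", "toExhibit")));      // true
    }
}
```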

ObjEntities may also contain mapping of their lifecycle callback methods.

1.3. CayenneModeler Application

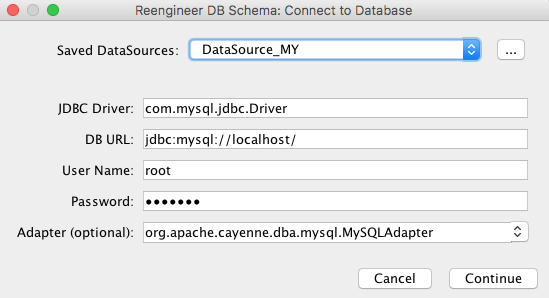

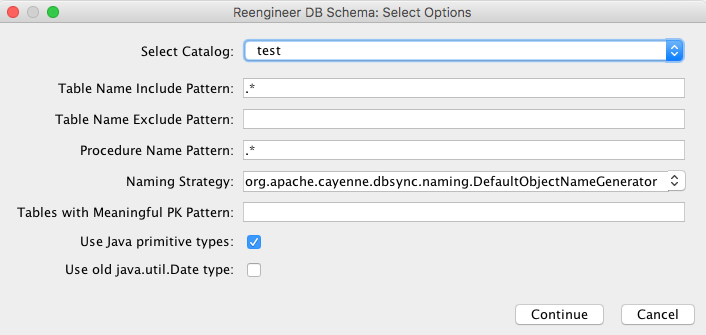

Reverse Engineering Database

See the "Reverse Engineering in Cayenne Modeler" chapter.

Generating Database Schema

With CayenneModeler you can create simple database schemas without any additional database tools. This is a good option for initial database setup if you created your model entirely in the Modeler. You can start SQL schema generation by selecting the menu Tools > Generate Database Schema.

You can then select which parts of the database schema should be generated and which tables to include.

Generating Java Classes

Before using Cayenne in your code, you need to generate Java source code for persistent objects. This can be done with the Modeler GUI or via the cgen Maven/Ant plugin.

To generate classes in the Modeler, use Tools > Generate Classes.

There are three default types of code generation:

- Standard Persistent Objects: the default type of generation, suitable for almost all cases. Use this type unless you know exactly what you need to customize.

- Client Persistent Objects

- Advanced: in advanced mode you can control almost all aspects of code generation, including custom templates for Java code. See the default Cayenne templates on GitHub as an example.

Modeling Generic Persistent Classes

Normally each ObjEntity is mapped to a specific Java class (such as Artist or Painting) that explicitly declares all entity properties as pairs of getters and setters. However, Cayenne allows mapping a completely generic class to any number of entities. The only expectation is that a generic class implements org.apache.cayenne.DataObject. So an ideal candidate for a generic class is CayenneDataObject, or some custom subclass of CayenneDataObject.

If you don’t enter anything for the Java Class of an ObjEntity, Cayenne assumes generic mapping and uses the following implicit rules to determine the class of a generic object. If the DataMap "Custom Superclass" is set, runtime uses this class to instantiate new objects. If not, org.apache.cayenne.CayenneDataObject is used.

Class generation procedures (whether done in the Modeler or with Ant or Maven) skip entities that are mapped to CayenneDataObject explicitly or have no class mapping.
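The implicit rules above can be summarized as a small decision function. This is a sketch of the documented behavior, not Cayenne source. Class names are handled as plain strings so the example runs without Cayenne on the classpath, and com.example.MyGenericObject and com.example.Artist are hypothetical names.

```java
// Illustrative sketch, not Cayenne source: the implicit class rules for generic
// ObjEntities and the class-generation skip rule, as described above.
final class GenericClassRules {

    static final String CAYENNE_DATA_OBJECT = "org.apache.cayenne.CayenneDataObject";

    // entityJavaClass: the ObjEntity "Java Class" (null or blank if nothing was entered)
    // customSuperclass: the DataMap "Custom Superclass" (null or blank if not set)
    static String runtimeClass(String entityJavaClass, String customSuperclass) {
        if (!isBlank(entityJavaClass)) {
            return entityJavaClass;       // explicit class mapping
        }
        if (!isBlank(customSuperclass)) {
            return customSuperclass;      // generic mapping via DataMap "Custom Superclass"
        }
        return CAYENNE_DATA_OBJECT;       // generic mapping, framework default
    }

    // Class generation skips entities with no class mapping or mapped to CayenneDataObject.
    static boolean skippedByClassGeneration(String entityJavaClass) {
        return isBlank(entityJavaClass) || CAYENNE_DATA_OBJECT.equals(entityJavaClass);
    }

    private static boolean isBlank(String s) {
        return s == null || s.trim().isEmpty();
    }

    public static void main(String[] args) {
        System.out.println(runtimeClass(null, "com.example.MyGenericObject"));
        System.out.println(runtimeClass(null, null));
        System.out.println(skippedByClassGeneration("com.example.Artist"));
    }
}
```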

2. Cayenne Framework

2.1. Including Cayenne in a Project

Jar Files

This is an overview of Cayenne jars that is agnostic of the build tool used. The following are the important libraries:

- cayenne-di-4.0.3.jar - Cayenne dependency injection (DI) container library. All applications will require this file.

- cayenne-server-4.0.3.jar - contains the main Cayenne runtime (adapters, DB access classes, etc.). Most applications will require this file.

- cayenne-client-4.0.3.jar - a client-side runtime for ROP applications.

- Other cayenne-* jars - various Cayenne tools and extensions.

Dependencies

With modern build tools like Maven and Gradle, you don't need to worry about tracking dependencies. If you use one of those, you can skip this section and go straight to the Maven section below. However, if your environment requires manual dependency resolution (like Ant), the distribution provides all of the Cayenne jars plus a minimal set of third-party dependencies to get you started in a default configuration. Check the lib and lib/third-party folders for those.

Dependencies for non-standard configurations will need to be figured out by the users on their own. Check pom.xml files of the corresponding Cayenne modules in one of the searchable Maven archives out there to get an idea of those dependencies (e.g. http://search.maven.org).

Maven Projects

If you are using Maven, you won’t have to deal with figuring out the dependencies. You can simply include cayenne-server artifact in your POM:

<dependency>
    <groupId>org.apache.cayenne</groupId>
    <artifactId>cayenne-server</artifactId>
    <version>4.0.3</version>
</dependency>

Additionally, Cayenne provides a Maven plugin with a set of goals to perform various project tasks, such as synching generated Java classes with the mapping, described in the following subsections. The full plugin name is org.apache.cayenne.plugins:cayenne-maven-plugin.

cgen

cgen is a cayenne-maven-plugin goal that generates and maintains source (.java) files of persistent objects based on a DataMap. By default, it is bound to the generate-sources phase. If "makePairs" is set to "true" (which is the recommended default), this task will generate a pair of classes (superclass/subclass) for each ObjEntity in the DataMap. Superclasses should not be changed manually, since they are always overwritten. Subclasses are never overwritten and may be later customized by the user. If "makePairs" is set to "false", a single class will be generated for each ObjEntity.

By creating custom templates, you can use cgen to generate other output (such as web pages, reports, specialized code templates) based on DataMap information.
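The superclass/subclass split produced with "makePairs" can be illustrated without Cayenne on the classpath. In this simplified sketch, BaseDataObject is a hypothetical stand-in for CayenneDataObject. The _Artist/Artist names assume the underscore-prefixed superclass naming of the default templates. Real generated superclasses contain more generated members than shown here.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical stand-in for a persistent base class such as CayenneDataObject:
// property values are stored in a map and accessed by name.
abstract class BaseDataObject {
    private final Map<String, Object> values = new HashMap<>();

    protected Object readProperty(String name) {
        return values.get(name);
    }

    protected void writeProperty(String name, Object value) {
        values.put(name, value);
    }
}

// Superclass half of the pair: entirely generated and overwritten on every cgen run,
// so it should never be edited by hand.
abstract class _Artist extends BaseDataObject {
    public static final String NAME_PROPERTY = "name";

    public void setName(String name) {
        writeProperty(NAME_PROPERTY, name);
    }

    public String getName() {
        return (String) readProperty(NAME_PROPERTY);
    }
}

// Subclass half of the pair: generated once, then owned by the developer.
// Custom logic placed here survives regeneration.
class Artist extends _Artist {
    public String getDisplayName() {
        return "Artist: " + getName();
    }
}

final class CgenPairDemo {
    public static void main(String[] args) {
        Artist artist = new Artist();
        artist.setName("Picasso");
        System.out.println(artist.getDisplayName()); // Artist: Picasso
    }
}
```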

| Name | Type | Description |
|---|---|---|
| map | File | DataMap XML file which serves as a source of metadata for class generation. E.g. ${project.basedir}/src/main/resources/my.map.xml |

| Name | Type | Description |
|---|---|---|
| additionalMaps | File | A directory that contains additional DataMap XML files that may be needed to resolve cross-DataMap relationships for the main DataMap, for which class generation occurs. |
| client | boolean | Whether we are generating classes for the client tier in a Remote Object Persistence application. "false" by default. |
| destDir | File | Root destination directory for Java classes (ignoring their package names). The default is "src/main/java". |
| embeddableTemplate | String | Location of a custom Velocity template file for Embeddable class generation. If omitted, the default template is used. |
| embeddableSuperTemplate | String | Location of a custom Velocity template file for Embeddable superclass generation. Ignored unless "makePairs" is set to "true". If omitted, the default template is used. |
| encoding | String | Generated files encoding, if different from the default on the current platform. Target encoding must be supported by the JVM running the build. Standard encodings supported by Java on all platforms are US-ASCII, ISO-8859-1, UTF-8, UTF-16BE, UTF-16LE, UTF-16. See javadocs for java.nio.charset.Charset for more information. |
| excludeEntities | String | A comma-separated list of ObjEntity patterns (expressed as a perl5 regex) to exclude from template generation. By default none of the DataMap entities are excluded. |
| includeEntities | String | A comma-separated list of ObjEntity patterns (expressed as a perl5 regex) to include in template generation. By default all DataMap entities are included. |
| makePairs | boolean | If "true" (a recommended default), will generate subclass/superclass pairs, with all generated code placed in the superclass. |
| mode | String | Specifies the class generator iteration target. There are three possible values: "entity" (default), "datamap", "all". "entity" performs one generator iteration for each included ObjEntity, applying either standard or custom entity templates. "datamap" performs a single iteration, applying DataMap templates. "all" is a combination of "entity" and "datamap". |
| overwrite | boolean | Only has effect when "makePairs" is set to "false". If "overwrite" is "true", will overwrite older versions of generated classes. |
| superPkg | String | Java package name of all generated superclasses. If omitted, each superclass will be placed in the subpackage of its subclass called "auto". Doesn’t have any effect if either "makePairs" or "usePkgPath" is false (both are true by default). |
| superTemplate | String | Location of a custom Velocity template file for ObjEntity superclass generation. Only has effect if "makePairs" is set to "true". If omitted, the default template is used. |
| template | String | Location of a custom Velocity template file for ObjEntity class generation. If omitted, the default template is used. |
| usePkgPath | boolean | If set to "true" (default), a directory tree will be generated in "destDir" corresponding to the class package structure; if set to "false", classes will be generated in "destDir" ignoring their package. |
| createPropertyNames | boolean | If set to "true", will generate String property names. Default is "false". |
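The interplay of destDir, usePkgPath and superPkg can be sketched with java.nio.file paths. This is an illustrative model, not the cgen implementation. The "_" prefix for superclass file names is an assumption based on the default templates, and com.example is a hypothetical package.

```java
import java.nio.file.Path;
import java.nio.file.Paths;

// Illustrative model, not the cgen implementation: where generated files land for a
// given destDir, usePkgPath and superPkg. The "_" superclass prefix is an assumption.
final class CgenLayout {

    // With makePairs=true: superPkg if set, otherwise the "auto" subpackage of the subclass.
    static String superclassPackage(String subclassPackage, String superPkg) {
        if (superPkg != null && !superPkg.isEmpty()) {
            return superPkg;
        }
        return subclassPackage + ".auto";
    }

    // usePkgPath=true mirrors the package as directories under destDir;
    // usePkgPath=false places every file directly in destDir.
    static Path javaFile(Path destDir, String javaPackage, String simpleName, boolean usePkgPath) {
        Path dir = usePkgPath ? destDir.resolve(javaPackage.replace('.', '/')) : destDir;
        return dir.resolve(simpleName + ".java");
    }

    public static void main(String[] args) {
        Path destDir = Paths.get("src/main/java"); // the documented default destDir
        String pkg = "com.example";
        System.out.println(javaFile(destDir, pkg, "Artist", true));
        System.out.println(javaFile(destDir, superclassPackage(pkg, null), "_Artist", true));
    }
}
```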

Example - a typical class generation scenario, where pairs of classes are generated with default Maven source destination and superclass package:

<plugin>
    <groupId>org.apache.cayenne.plugins</groupId>
    <artifactId>cayenne-maven-plugin</artifactId>
    <version>4.0.3</version>
    <configuration>
        <map>${project.basedir}/src/main/resources/my.map.xml</map>
    </configuration>
    <executions>
        <execution>
            <goals>
                <goal>cgen</goal>
            </goals>
        </execution>
    </executions>
</plugin>

cdbgen

cdbgen is a cayenne-maven-plugin goal that drops and/or generates tables in a database based on a Cayenne DataMap. By default, it is bound to the pre-integration-test phase.

| Name | Type | Description |
|---|---|---|
| map | File | DataMap XML file which serves as a source of metadata for schema generation. E.g. ${project.basedir}/src/main/resources/my.map.xml |
| dataSource | XML | An object that contains Data Source parameters. |

| Name | Type | Required | Description |
|---|---|---|---|
| driver | String | Yes | A class of JDBC driver to use for the target database. |
| url | String | Yes | JDBC URL of a target database. |
| username | String | No | Database user name. |
| password | String | No | Database user password. |

| Name | Type | Description |
|---|---|---|
| adapter | String | Java class name implementing org.apache.cayenne.dba.DbAdapter. While this attribute is optional (a generic JdbcAdapter is used if not set), it is highly recommended to specify the correct target adapter. |
| createFK | boolean | Indicates whether cdbgen should create foreign key constraints. Default is "true". |
| createPK | boolean | Indicates whether cdbgen should create Cayenne-specific auto PK objects. Default is "true". |
| createTables | boolean | Indicates whether cdbgen should create new tables. Default is "true". |
| dropPK | boolean | Indicates whether cdbgen should drop Cayenne primary key support objects. Default is "false". |
| dropTables | boolean | Indicates whether cdbgen should drop the tables before attempting to create new ones. Default is "false". |
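The boolean parameters above can be read as switches that select schema operations. This is an illustrative model, not cdbgen's implementation. The defaults follow the table. The relative order (drops before creates, FK constraints after tables) is an assumption made for this sketch, apart from the documented fact that tables are dropped before new ones are created.

```java
import java.util.ArrayList;
import java.util.List;

// Illustrative model, not cdbgen's implementation: which schema operations the boolean
// parameters enable. Defaults follow the table above. The relative order (drops before
// creates, FK constraints after tables) is an assumption made for this sketch.
final class CdbgenPlan {
    boolean dropTables = false;   // documented default
    boolean dropPK = false;       // documented default
    boolean createTables = true;  // documented default
    boolean createPK = true;      // documented default
    boolean createFK = true;      // documented default

    List<String> steps() {
        List<String> steps = new ArrayList<>();
        if (dropTables) steps.add("drop tables");
        if (dropPK) steps.add("drop PK support objects");
        if (createTables) steps.add("create tables");
        if (createPK) steps.add("create PK support objects");
        if (createFK) steps.add("create FK constraints");
        return steps;
    }

    public static void main(String[] args) {
        System.out.println(new CdbgenPlan().steps());

        CdbgenPlan rebuild = new CdbgenPlan();
        rebuild.dropTables = true; // e.g. recreate a throwaway test schema from scratch
        rebuild.dropPK = true;
        System.out.println(rebuild.steps());
    }
}
```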

Example - creating a DB schema on a local HSQLDB database:

<plugin>
    <groupId>org.apache.cayenne.plugins</groupId>
    <artifactId>cayenne-maven-plugin</artifactId>
    <version>4.0.3</version>
    <executions>
        <execution>
            <configuration>
                <map>${project.basedir}/src/main/resources/my.map.xml</map>
                <adapter>org.apache.cayenne.dba.hsqldb.HSQLDBAdapter</adapter>
                <dataSource>
                    <url>jdbc:hsqldb:hsql://localhost/testdb</url>
                    <driver>org.hsqldb.jdbcDriver</driver>
                    <username>sa</username>
                </dataSource>
            </configuration>
            <goals>
                <goal>cdbgen</goal>
            </goals>
        </execution>
    </executions>
</plugin>

cdbimport

cdbimport is a cayenne-maven-plugin goal that generates a DataMap based on an existing database schema. By default, it is bound to the generate-sources phase. This allows you to generate your DataMap prior to building your project, possibly followed by a "cgen" execution to generate the classes. The cdbimport goal is described in detail in the "DB-First Flow" chapter.

| Name | Type | Required | Description |
|---|---|---|---|
| map | File | Yes | DataMap XML file which is the destination of the schema import. Can be an existing file. If this file does not exist, it is created when cdbimport is executed. |
| adapter | String | No | A Java class name implementing org.apache.cayenne.dba.DbAdapter. This attribute is optional. If not specified, AutoAdapter is used, which will attempt to guess the DB type. |
| dataSource | XML | Yes | An object that contains Data Source parameters. |
| dbimport | XML | No | An object that contains detailed reverse engineering rules about what DB objects should be processed. For full information about this parameter see the DB-First Flow chapter. |

| Name | Type | Required | Description |
|---|---|---|---|
| driver | String | Yes | A class of JDBC driver to use for the target database. |
| url | String | Yes | JDBC URL of a target database. |
| username | String | No | Database user name. |
| password | String | No | Database user password. |

| Name | Type | Description |
|---|---|---|
| defaultPackage | String | A Java package that will be set as the imported DataMap default and a package of all the persistent Java classes. This is a required attribute if the "map" itself does not already contain a default package, as otherwise all the persistent classes will be mapped with no package, and will not compile. |
| forceDataMapCatalog | boolean | Automatically tagging each DbEntity with the actual DB catalog/schema (default behavior) may sometimes be undesirable. If this is the case, then setting forceDataMapCatalog to "true" will set the DbEntity catalog to the one in the DataMap. |
| forceDataMapSchema | boolean | Automatically tagging each DbEntity with the actual DB catalog/schema (default behavior) may sometimes be undesirable. If this is the case, then setting forceDataMapSchema to "true" will set the DbEntity schema to the one in the DataMap. |
| meaningfulPkTables | String | A comma-separated list of Perl5 patterns that defines which imported tables should have their primary key columns mapped as ObjAttributes. "*" would indicate all tables. |
| namingStrategy | String | The naming strategy used for mapping database names to object entity names. |
| skipPrimaryKeyLoading | boolean | Whether to skip loading primary keys. Default "false". |
| skipRelationshipsLoading | boolean | Whether to skip loading relationships. Default "false". |
| stripFromTableNames | String | Regex that matches the part of the table name that needs to be stripped off when generating the ObjEntity name. |
| usePrimitives | boolean | Whether numeric and boolean data types should be mapped as Java primitives or Java classes. Default is "true", i.e. primitives will be used. |
| useJava7Types | boolean | Whether |
| filters configuration | XML | Detailed reverse engineering rules about what DB objects should be processed. For full information about this parameter see the DB-First Flow chapter. |
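The effect of stripFromTableNames can be approximated in a few lines. This sketch removes every match of the regex and then applies a simple underscore-to-CamelCase conversion. Both behaviors are assumptions made for illustration. The conversion approximates, but is not, Cayenne's default naming strategy, and the "TBL_" prefix is a hypothetical example.

```java
import java.util.Locale;

// Approximation, not Cayenne's naming strategy: derives an ObjEntity name from a
// table name after applying a stripFromTableNames-style regex.
final class EntityNaming {

    // Assumption: every match of the regex is removed from the table name.
    static String strip(String tableName, String stripRegex) {
        return stripRegex == null ? tableName : tableName.replaceAll(stripRegex, "");
    }

    // Converts an underscore-separated DB name to UpperCamelCase, e.g. ARTIST_INFO -> ArtistInfo.
    static String toEntityName(String dbName) {
        StringBuilder sb = new StringBuilder();
        for (String part : dbName.split("_")) {
            if (part.isEmpty()) {
                continue; // skip empty segments from leading or doubled underscores
            }
            sb.append(Character.toUpperCase(part.charAt(0)))
              .append(part.substring(1).toLowerCase(Locale.ROOT));
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        // prints ArtistInfo
        System.out.println(toEntityName(strip("TBL_ARTIST_INFO", "^TBL_")));
    }
}
```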

Example - loading a DB schema from a local MySQL database (essentially a reverse operation compared to the cdbgen example above):

<plugin>
    <groupId>org.apache.cayenne.plugins</groupId>
    <artifactId>cayenne-maven-plugin</artifactId>
    <version>4.0.3</version>
    <executions>
        <execution>
            <configuration>
                <map>${project.basedir}/src/main/resources/my.map.xml</map>
                <dataSource>
                    <url>jdbc:mysql://127.0.0.1/mydb</url>
                    <driver>com.mysql.jdbc.Driver</driver>
                    <username>sa</username>
                </dataSource>
                <dbimport>
                    <defaultPackage>com.example.cayenne</defaultPackage>
                </dbimport>
            </configuration>
            <goals>
                <goal>cdbimport</goal>
            </goals>
        </execution>
    </executions>
</plugin>

Gradle Projects

To include Cayenne in your Gradle project you have two options:

- Simply add Cayenne as a dependency:

  compile 'org.apache.cayenne:cayenne-server:4.0.3'

- Or use the Cayenne Gradle plugin.

Gradle Plugin

The Cayenne Gradle plugin provides several tasks, such as synching generated Java classes with the mapping or synching the mapping with the database. The plugin also provides a cayenne extension that has some useful utility methods. Here is an example of how to include the Cayenne plugin in your project:

buildscript {
    // add Maven Central repository
    repositories {
        mavenCentral()
    }
    // add Cayenne Gradle Plugin
    dependencies {
        classpath group: 'org.apache.cayenne.plugins', name: 'cayenne-gradle-plugin', version: '4.0.3'
    }
}

// apply plugin
apply plugin: 'org.apache.cayenne'

// set default DataMap
cayenne.defaultDataMap 'datamap.map.xml'

// add Cayenne dependencies to your project
dependencies {
    // this is a shortcut for 'org.apache.cayenne:cayenne-server:VERSION_OF_PLUGIN'
    compile cayenne.dependency('server')
    compile cayenne.dependency('java8')
}

cgen

The cgen task generates Java classes based on your DataMap. It has the same configuration parameters as the cgen goal of the Maven plugin, described in the cgen parameter tables above. If you provided a default DataMap via cayenne.defaultDataMap, you can skip cgen configuration, as the default settings will suffice in the common case.

Here is how you can change settings of the default cgen task:

cgen {
    client = false
    mode = 'all'
    overwrite = true
    createPropertyNames = true
}

And here is an example of how to define an additional cgen task (e.g. for client classes if you are using ROP):

task clientCgen(type: cayenne.cgen) {
    client = true
}

cdbimport

This task is for creating and synchronizing your Cayenne model from a database schema. The full list of parameters is the same as for the cdbimport goal of the Maven plugin, described in the cdbimport parameter tables above, with the exception that the Gradle version uses Groovy instead of XML.

Here is an example of configuration for the cdbimport task:

cdbimport {
    // map can be skipped if it is defined in cayenne.defaultDataMap
    map 'datamap.map.xml'

    dataSource {
        driver 'com.mysql.cj.jdbc.Driver'
        url 'jdbc:mysql://127.0.0.1:3306/test?useSSL=false'
        username 'root'
        password ''
    }

    dbImport {
        // additional settings
        usePrimitives false
        defaultPackage 'org.apache.cayenne.test'

        // DB filter configuration
        catalog 'catalog-1'
        schema 'schema-1'

        catalog {
            name 'catalog-2'
            includeTable 'table0', {
                excludeColumns '_column_'
            }
            includeTables 'table1', 'table2', 'table3'
            includeTable 'table4', {
                includeColumns 'id', 'type', 'data'
            }
            excludeTable '^GENERATED_.*'
        }

        catalog {
            name 'catalog-3'
            schema {
                name 'schema-2'
                includeTable 'test_table'
                includeTable 'test_table2', {
                    excludeColumn '__excluded'
                }
            }
        }

        includeProcedure 'procedure_test_1'
        includeColumns 'id', 'version'
        tableTypes 'TABLE', 'VIEW'
    }
}

cdbgen

The cdbgen task drops and/or generates tables in a database based on a Cayenne DataMap. The full list of parameters is the same as for the cdbgen goal of the Maven plugin, described in the cdbgen parameter tables above.

Here is an example of how to configure the default cdbgen task:

cdbgen {
    adapter 'org.apache.cayenne.dba.derby.DerbyAdapter'

    dataSource {
        driver 'org.apache.derby.jdbc.EmbeddedDriver'
        url 'jdbc:derby:build/testdb;create=true'
        username 'sa'
        password ''
    }

    dropTables true
    dropPk true

    createTables true
    createPk true
    createFk true
}

Link tasks to Gradle build lifecycle

With Gradle you can easily connect Cayenne tasks to the default build lifecycle. Here is a short example of how to connect the default cgen and cdbimport tasks with the compileJava task:

cgen.dependsOn cdbimport
compileJava.dependsOn cgen

Running cdbimport automatically with the build is not always a good choice, e.g. in the case of a complex model that you need to alter in CayenneModeler after import.

Ant Projects

Ant tasks are the same as Maven plugin goals described above, namely "cgen", "cdbgen", "cdbimport". Configuration parameters are also similar (except Maven can guess many defaults that Ant can’t). To include Ant tasks in the project, use the following Antlib:

<typedef resource="org/apache/cayenne/tools/antlib.xml">
    <classpath>
        <fileset dir="lib">
            <include name="cayenne-ant-*.jar" />
            <include name="cayenne-cgen-*.jar" />
            <include name="cayenne-dbsync-*.jar" />
            <include name="cayenne-di-*.jar" />
            <include name="cayenne-project-*.jar" />
            <include name="cayenne-server-*.jar" />
            <include name="commons-collections-*.jar" />
            <include name="commons-lang-*.jar" />
            <include name="slf4j-api-*.jar" />
            <include name="velocity-*.jar" />
            <include name="vpp-2.2.1.jar" />
        </fileset>
    </classpath>
</typedef>

cdbimport

This is an Ant counterpart of "cdbimport" goal of cayenne-maven-plugin described above. It has exactly the same properties. Here is a usage example:

<cdbimport map="${context.dir}/WEB-INF/my.map.xml"
           driver="com.mysql.jdbc.Driver"
           url="jdbc:mysql://127.0.0.1/mydb"
           username="sa"
           defaultPackage="com.example.cayenne"/>

2.2. Starting Cayenne

Starting and Stopping ServerRuntime

At runtime, Cayenne is accessed via org.apache.cayenne.configuration.server.ServerRuntime. ServerRuntime is created by calling a convenient builder:

import org.apache.cayenne.configuration.server.ServerRuntime;

ServerRuntime runtime = ServerRuntime.builder()
        .addConfig("com/example/cayenne-project.xml")
        .build();

The parameter you pass to the builder is the location of the main project file. The location is a '/'-separated path (the same path separator is used on UNIX and Windows) that is resolved relative to the application classpath. The project file can be placed in the root package or in a subpackage (e.g. in the code above it is in the "com/example" subpackage).
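The '/'-separated location convention is the same one used by the standard ClassLoader.getResource() call. That makes it easy to check whether a project file is actually on the classpath when the builder cannot find it. ServerRuntime performs its own lookup. The helper class ProjectLocation below is hypothetical and only illustrates the convention.

```java
import java.net.URL;

// Hypothetical diagnostic helper, not part of Cayenne: builds and checks a
// '/'-separated classpath location of the kind passed to ServerRuntime.builder().
final class ProjectLocation {

    // Turns a Java package plus file name into a location such as
    // "com/example/cayenne-project.xml"; an empty package means the classpath root.
    static String location(String javaPackage, String fileName) {
        return javaPackage.isEmpty() ? fileName : javaPackage.replace('.', '/') + "/" + fileName;
    }

    // Returns the URL of the location on the classpath, or null if it is not found.
    static URL find(String location) {
        ClassLoader loader = Thread.currentThread().getContextClassLoader();
        return loader != null ? loader.getResource(location) : ClassLoader.getSystemResource(location);
    }

    public static void main(String[] args) {
        String loc = location("com.example", "cayenne-project.xml");
        System.out.println(loc + " -> " + find(loc));
    }
}
```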

ServerRuntime encapsulates a single Cayenne stack. Most applications will just have one ServerRuntime using it to create as many ObjectContexts as needed, access the Dependency Injection (DI) container and work with other Cayenne features. Internally ServerRuntime is just a thin wrapper around the DI container. Detailed features of the container are discussed in Customizing Cayenne Runtime chapter. Here we’ll just show an example of how an application might turn on external transactions:

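import org.apache.cayenne.configuration.Constants;
import org.apache.cayenne.configuration.server.ServerModule;
import org.apache.cayenne.configuration.server.ServerRuntime;
import org.apache.cayenne.di.Module;

// a custom DI module that turns on external transactions via a runtime property
Module extensions = binder ->
        ServerModule.contributeProperties(binder).put(Constants.SERVER_EXTERNAL_TX_PROPERTY, "true");

ServerRuntime runtime = ServerRuntime.builder()
        .addConfig("com/example/cayenne-project.xml")
        .addModule(extensions)
        .build();

It is a good idea to shut down the runtime when it is no longer needed, usually before the application itself is shut down: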

runtime.shutdown();

When a runtime object has the same scope as the application, this may not always be necessary; however, in some cases it is essential, and it is generally considered good practice. E.g. in a web container, hot redeploy of a webapp will cause resource leaks and an eventual OutOfMemoryError if the application fails to shut down the runtime.

Merging Multiple Projects

ServerRuntime requires at least one mapping project to run. But it can also take multiple projects and merge them together in a single configuration. This way different parts of a database can be mapped independently from each other (even by different software providers), and combined in runtime when assembling an application. Doing it is as easy as passing multiple project locations to ServerRuntime builder:

ServerRuntime runtime = ServerRuntime.builder()
        .addConfig("com/example/cayenne-project.xml")
        .addConfig("org/foo/cayenne-library1.xml")
        .addConfig("org/foo/cayenne-library2.xml")
        .build();

When the projects are merged, the following rules are applied:

- The order of projects matters during merge. If there are two conflicting metadata objects belonging to two projects, an object from the last project takes precedence over the object from the first one. This makes it possible to override pieces of metadata. This is also similar to how DI modules are merged in Cayenne.

- Runtime DataDomain name is set to the name of the last project in the list.

- Runtime DataDomain properties are the same as the properties of the last project in the list. I.e. properties are not merged, to avoid invalid combinations and unexpected runtime behavior.

- If there are two or more DataMaps with the same name, only one DataMap is used in the merged project; the rest are discarded. The same precedence rules apply: the DataMap from the project with the highest index in the project list overrides all other DataMaps with the same name.

- If there are two or more DataNodes with the same name, only one DataNode is used in the merged project; the rest are discarded. The DataNode coming from the project with the highest index in the project list is chosen per the precedence rule above.

- There is a notion of a "default" DataNode. After the merge, if any DataMaps are not explicitly linked to DataNodes, their queries will be executed via the default DataNode. This makes it possible to build mapping "libraries" that are only associated with a specific database at runtime. If there’s only one DataNode in the merged project, it will be automatically chosen as default. A possible way to explicitly designate a specific node as default is to override DataDomainProvider.createAndInitDataDomain().
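The "last project wins" rules map naturally onto insertion-ordered maps, where a later put() replaces an earlier value with the same key. Below is an illustrative model of the merge rules listed above, not Cayenne source. Each project is reduced to a name, DataDomain properties, and the names of its DataMaps and DataNodes. The project names "library" and "app", the DataMap names and "mainNode" are hypothetical.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Illustrative model, not Cayenne source, of the project merge rules listed above.
// Each merged DataMap/DataNode entry records the name of the project it came from.
final class ProjectMerge {

    static final class Project {
        final String name;
        final Map<String, String> domainProperties = new LinkedHashMap<>();
        final Map<String, String> dataMaps = new LinkedHashMap<>();  // DataMap name -> origin
        final Map<String, String> dataNodes = new LinkedHashMap<>(); // DataNode name -> origin

        Project(String name) { this.name = name; }

        Project map(String mapName) { dataMaps.put(mapName, name); return this; }

        Project node(String nodeName) { dataNodes.put(nodeName, name); return this; }
    }

    static final class Merged {
        String domainName;
        Map<String, String> domainProperties = new LinkedHashMap<>();
        final Map<String, String> dataMaps = new LinkedHashMap<>();
        final Map<String, String> dataNodes = new LinkedHashMap<>();
        String defaultNode; // chosen automatically only if exactly one DataNode remains
    }

    static Merged merge(List<Project> projects) {
        Merged merged = new Merged();
        for (Project p : projects) {                    // first to last, so later projects win
            merged.domainName = p.name;                  // name of the last project
            merged.domainProperties = new LinkedHashMap<>(p.domainProperties); // replaced, not merged
            merged.dataMaps.putAll(p.dataMaps);          // same name: later DataMap replaces earlier
            merged.dataNodes.putAll(p.dataNodes);        // same name: later DataNode replaces earlier
        }
        if (merged.dataNodes.size() == 1) {
            merged.defaultNode = merged.dataNodes.keySet().iterator().next();
        }
        return merged;
    }

    public static void main(String[] args) {
        Project library = new Project("library").map("shared").map("libraryOnly");
        Project app = new Project("app").map("shared").node("mainNode");
        Merged m = merge(Arrays.asList(library, app));
        System.out.println(m.domainName);  // app
        System.out.println(m.dataMaps);    // {shared=app, libraryOnly=library}
        System.out.println(m.defaultNode); // mainNode
    }
}
```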

Web Applications

Web applications can use a variety of mechanisms to configure and start the "services" they need, Cayenne being one of such services. Configuration can be done within standard Servlet specification objects like Servlets, Filters, or ServletContextListeners, or can use Spring, JEE CDI, etc. This is a user’s architectural choice and Cayenne is agnostic to it and will happily work in any environment. As described above, all that is needed is to create an instance of ServerRuntime somewhere and provide the application code with means to access it. And shut it down when the application ends to avoid container leaks.

Still, Cayenne includes a piece of web app configuration code that can assist in quickly setting up simple Cayenne-enabled web applications. We are talking about CayenneFilter. It is declared in web.xml:

<web-app>
    ...
    <filter>
        <filter-name>cayenne-project</filter-name>
        <filter-class>org.apache.cayenne.configuration.web.CayenneFilter</filter-class>
    </filter>
    <filter-mapping>
        <filter-name>cayenne-project</filter-name>
        <url-pattern>/*</url-pattern>
    </filter-mapping>
    ...
</web-app>

When started by the web container, it creates an instance of ServerRuntime and stores it in the ServletContext. Note that the name of the Cayenne XML project file is derived from the "filter-name". In the example above, CayenneFilter will look for an XML file "cayenne-project.xml". This can be overridden with the "configuration-location" init parameter.

When the application runs, all HTTP requests matching the filter url-pattern will have access to a session-scoped ObjectContext like this:

ObjectContext context = BaseContext.getThreadObjectContext();

Of course, the ObjectContext scope and other behavior of the Cayenne runtime can be customized via dependency injection. For this, another filter init parameter called "extra-modules" is used. "extra-modules" is a comma- or space-separated list of class names, with each class implementing the Module interface. These optional custom modules are loaded after the standard ones, which allows users to override all standard definitions.
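The two CayenneFilter init parameters described above follow simple string conventions. This sketch models them and is not CayenneFilter's source. Trimming the location and tolerating mixed comma/space separators are assumptions, and com.example.AuditModule and com.example.ScopeModule are hypothetical Module class names.

```java
import java.util.ArrayList;
import java.util.List;

// Illustrative model, not CayenneFilter source, of two init parameters:
// "configuration-location" and "extra-modules".
final class FilterConfigModel {

    // "configuration-location", when present, overrides the default "<filter-name>.xml".
    static String projectLocation(String filterName, String configurationLocation) {
        if (configurationLocation != null && !configurationLocation.trim().isEmpty()) {
            return configurationLocation.trim();
        }
        return filterName + ".xml";
    }

    // "extra-modules" is a comma- or space-separated list of Module class names.
    static List<String> extraModules(String initParam) {
        List<String> classNames = new ArrayList<>();
        if (initParam == null) {
            return classNames;
        }
        for (String token : initParam.split("[,\\s]+")) {
            if (!token.isEmpty()) { // split() yields "" for a leading separator
                classNames.add(token);
            }
        }
        return classNames;
    }

    public static void main(String[] args) {
        System.out.println(projectLocation("cayenne-project", null)); // cayenne-project.xml
        System.out.println(extraModules("com.example.AuditModule, com.example.ScopeModule"));
    }
}
```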

For those interested in the DI container contents of the runtime created by CayenneFilter, it is the same ServerRuntime as would’ve been created by other means, but with an extra org.apache.cayenne.configuration.web.WebModule module that provides org.apache.cayenne.configuration.web.RequestHandler service. This is the service to override in the custom modules if you need to provide a different ObjectContext scope, etc.

| You should not think of CayenneFilter as the only way to start and use Cayenne in a web application. In fact CayenneFilter is entirely optional. Use it if you don’t have any special design for application service management. If you do, simply integrate Cayenne into that design. |

2.3. Persistent Objects and ObjectContext

ObjectContext

ObjectContext is an interface that users normally work with to access the database. It provides the API to execute database operations and to manage persistent objects. A context is obtained from the ServerRuntime:

ObjectContext context = runtime.newContext();

The call above creates a new instance of ObjectContext that can access the database via this runtime. ObjectContext is a single "work area" in Cayenne, storing persistent objects. ObjectContext guarantees that for each database row with a unique ID it will contain at most one instance of an object, thus ensuring object graph consistency between multiple selects (a feature called "uniquing"). At the same time different ObjectContexts will have independent copies of objects for each unique database row. This allows users to isolate object changes from one another by using separate ObjectContexts.
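Uniquing can be pictured as an identity map kept per context. The sketch below is a simplified, hypothetical model (the `SimpleContext` and `Artist` classes and the `fetch` method are illustrative, not Cayenne API): the database lookup is replaced by a function, and each context caches one instance per ID.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;

// Hypothetical identity map illustrating "uniquing": within one context,
// each row ID maps to at most one object instance. Not Cayenne's implementation.
class UniquingDemo {

    static final class Artist {
        final long id;
        String name;
        Artist(long id, String name) { this.id = id; this.name = name; }
    }

    static final class SimpleContext {
        private final Map<Long, Artist> registry = new HashMap<>();

        // "Fetches" a row; if an object for this ID is already registered,
        // the existing instance is returned instead of a new copy.
        Artist fetch(long id, Function<Long, String> rowLoader) {
            return registry.computeIfAbsent(id, key -> new Artist(key, rowLoader.apply(key)));
        }
    }

    public static void main(String[] args) {
        Function<Long, String> db = id -> "Artist #" + id; // stand-in for a DB read
        SimpleContext c1 = new SimpleContext();
        SimpleContext c2 = new SimpleContext();

        Artist a = c1.fetch(1L, db);
        Artist b = c1.fetch(1L, db);
        Artist other = c2.fetch(1L, db);

        System.out.println(a == b);     // true: same context, same instance
        System.out.println(a == other); // false: each context has its own copy

        a.name = "Changed";
        System.out.println(other.name); // Artist #1: the change is isolated to c1
    }
}
```

Because each context keeps its own map, an in-memory change made through one context stays invisible to the other, which is the isolation property described above.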

These properties directly affect the strategies for scoping and sharing (or not sharing) ObjectContexts. Contexts that are only used to fetch objects from the database, and whose objects are never modified by the application, can be shared between multiple users (and multiple threads). Contexts that store modified objects should be accessed only by a single user (e.g. a web application user might reuse a context instance between multiple web requests in the same HttpSession, thus carrying uncommitted changes to objects from request to request, until the user decides to commit or roll them back). Even for a single user it might make sense to use multiple ObjectContexts (e.g. request-scoped contexts to allow concurrent requests from the browser that change and commit objects independently).

ObjectContext is serializable and does not permanently hold on to any application resources, so it does not have to be closed. If the context is not used anymore, it should simply be allowed to go out of scope and get garbage collected, just like any other Java object.

Persistent Object and its Lifecycle

Cayenne can persist Java objects that implement org.apache.cayenne.Persistent interface. Generally persistent classes are generated from the model as described above, so users do not have to worry about superclass and property implementation details.

The Persistent interface provides access to 3 persistence-related properties: objectId, persistenceState and objectContext. All 3 are initialized by the Cayenne runtime framework. Application code should not attempt to change them. However, it is allowed to read them, which provides valuable runtime information. E.g. ObjectId can be used for a quick equality check of 2 objects, knowing the persistence state allows highlighting changed objects, etc.

Each persistent object belongs to a single ObjectContext, and can be in one of the following persistence states (as defined in org.apache.cayenne.PersistenceState):

| State | Description |
|---|---|
| TRANSIENT | The object is not registered with an ObjectContext and will not be persisted. |
| NEW | The object is freshly registered in an ObjectContext, but has not been saved to the database yet and there is no matching database row. |
| COMMITTED | The object is registered in an ObjectContext, there is a row in the database corresponding to this object, and the object state corresponds to the last known state of the matching database row. |
| MODIFIED | The object is registered in an ObjectContext, there is a row in the database corresponding to this object, but the object in-memory state has diverged from the last known state of the matching database row. |
| HOLLOW | The object is registered in an ObjectContext, there is a row in the database corresponding to this object, but the object state is unknown. Whenever an application tries to access a property of such an object, Cayenne attempts to read its values from the database and "inflate" the object, turning it into COMMITTED. |
| DELETED | The object is registered in an ObjectContext and has been marked for deletion in-memory. The corresponding row in the database will get deleted upon ObjectContext commit, and the object state will be turned into TRANSIENT. |
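The transitions implied by the table can be summarized as a small state machine. The following is a hypothetical sketch that covers only the transitions spelled out in this chapter (registering a new object, inflating a HOLLOW object, modifying, deleting, committing). It uses its own `State` enum rather than Cayenne's `PersistenceState` constants, and it deliberately leaves out rollback and the deletion of NEW objects.

```java
// Hypothetical state machine mirroring the persistence-state table.
// A simplified model, not Cayenne's code; Cayenne exposes these states
// as constants in org.apache.cayenne.PersistenceState.
class LifecycleDemo {

    enum State { TRANSIENT, NEW, COMMITTED, MODIFIED, HOLLOW, DELETED }

    // newObject(): a transient object becomes NEW
    static State register(State s) {
        if (s != State.TRANSIENT) throw new IllegalStateException("already registered: " + s);
        return State.NEW;
    }

    // Reading a property of a HOLLOW object "inflates" it from the database.
    static State read(State s) {
        return s == State.HOLLOW ? State.COMMITTED : s;
    }

    // Writing a property of a committed (or hollow, once inflated) object marks it MODIFIED.
    static State write(State s) {
        State current = read(s);
        return current == State.COMMITTED ? State.MODIFIED : current;
    }

    // deleteObjects(): an object with a matching DB row is marked DELETED.
    // (Deleting NEW objects is not modeled in this sketch.)
    static State delete(State s) {
        switch (s) {
            case COMMITTED: case MODIFIED: case HOLLOW: return State.DELETED;
            default: throw new IllegalStateException("not modeled: delete from " + s);
        }
    }

    // commitChanges(): pending changes are written, then states settle.
    static State commit(State s) {
        switch (s) {
            case NEW: case MODIFIED: return State.COMMITTED;
            case DELETED: return State.TRANSIENT;
            default: return s;
        }
    }

    public static void main(String[] args) {
        State s = register(State.TRANSIENT); // NEW
        s = commit(s);                       // COMMITTED
        s = write(s);                        // MODIFIED
        s = commit(s);                       // COMMITTED
        s = delete(s);                       // DELETED
        s = commit(s);                       // TRANSIENT
        System.out.println(s);
    }
}
```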

ObjectContext Persistence API

One of the first things users usually want to do with an ObjectContext is to select some objects from a database:

List<Artist> artists = ObjectSelect.query(Artist.class)
        .select(context);

We’ll discuss queries in some detail in the Queries chapter. The example above is self-explanatory: we create an ObjectSelect that matches all Artist objects present in the database, and then use select to get the result.

Some queries can be quite complex, returning multiple result sets or even updating the database. For such queries ObjectContext provides the performGenericQuery() method. While not commonly used, it is nevertheless important in some situations. E.g.:

Collection<Query> queries = ... // multiple queries that need to be run together
QueryChain query = new QueryChain(queries);
QueryResponse response = context.performGenericQuery(query);

An application might modify selected objects. E.g.:

Artist selectedArtist = artists.get(0);
selectedArtist.setName("Dali");

The first time the object property is changed, the object’s state is automatically set to MODIFIED by Cayenne. Cayenne tracks all in-memory changes until a user calls commitChanges:

context.commitChanges();

At this point all in-memory changes are analyzed and a minimal set of SQL statements is issued in a single transaction to synchronize the database with the in-memory state. In our example commitChanges commits just one object, but generally it can be any number of objects.
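As a rough illustration of what "a minimal set of SQL statements" can mean for an update, the sketch below compares the last committed snapshot of an object with its in-memory values and builds an UPDATE that touches only the changed columns. It is a model under stated assumptions, not Cayenne's SQL generator: the `ARTIST` table, the `ID` key column, the snapshot values and the helper names are all hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Objects;
import java.util.StringJoiner;

// Hypothetical sketch of change analysis at commit time: compare the last
// committed snapshot with the in-memory values and emit an UPDATE that
// touches only the changed columns. Not Cayenne's actual SQL generator.
class ChangeDiffDemo {

    static Map<String, Object> changedColumns(Map<String, Object> snapshot, Map<String, Object> current) {
        Map<String, Object> diff = new LinkedHashMap<>();
        for (Map.Entry<String, Object> e : current.entrySet()) {
            if (!Objects.equals(snapshot.get(e.getKey()), e.getValue())) {
                diff.put(e.getKey(), e.getValue());
            }
        }
        return diff;
    }

    // Returns null when nothing changed, i.e. no statement needs to be sent.
    static String updateSql(String table, String pkColumn, Map<String, Object> diff) {
        if (diff.isEmpty()) return null;
        StringJoiner sets = new StringJoiner(", ");
        for (String column : diff.keySet()) sets.add(column + " = ?");
        return "UPDATE " + table + " SET " + sets + " WHERE " + pkColumn + " = ?";
    }

    public static void main(String[] args) {
        Map<String, Object> snapshot = new LinkedHashMap<>();
        snapshot.put("NAME", "Salvador");
        snapshot.put("DATE_OF_BIRTH", "1904-05-11");

        Map<String, Object> current = new LinkedHashMap<>(snapshot);
        current.put("NAME", "Dali"); // like selectedArtist.setName("Dali")

        Map<String, Object> diff = changedColumns(snapshot, current);
        System.out.println(updateSql("ARTIST", "ID", diff));
        // UPDATE ARTIST SET NAME = ? WHERE ID = ?
    }
}
```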

If instead of commit, we wanted to reset all changed objects to the previously committed state, we’d call rollbackChanges instead:

context.rollbackChanges();

The newObject method creates a persistent object and sets its state to NEW:

Artist newArtist = context.newObject(Artist.class);
newArtist.setName("Picasso");

It will only exist in memory until commitChanges is issued. On commit Cayenne might generate a new primary key (unless a user set it explicitly, or a PK was inferred from a relationship) and issue an INSERT SQL statement to permanently store the object.

The deleteObjects method takes one or more Persistent objects and marks them as DELETED:

context.deleteObjects(artist1);
context.deleteObjects(artist2, artist3, artist4);

Additionally, deleteObjects processes all delete rules modeled for the affected objects. This may result in implicitly deleting or modifying extra related objects. As with inserts and updates, delete operations are sent to the database only when commitChanges is called. Similarly, rollbackChanges will undo the effect of newObject and deleteObjects.

localObject returns a copy of a given persistent object that is local to a given ObjectContext.

Since an application often works with more than one context, localObject is a rather common operation. E.g. to improve performance a user might utilize a single shared context to select and cache data, and then occasionally transfer some selected objects to another context to modify and commit them:

ObjectContext editingContext = runtime.newContext();
Artist localArtist = editingContext.localObject(artist);

Often an application needs to inspect mapping metadata. This information is stored in the EntityResolver object, accessible via the ObjectContext:

EntityResolver resolver = objectContext.getEntityResolver();

Here we discussed the most commonly used subset of the ObjectContext API. There are other useful methods, e.g. those that allow inspecting the state of registered objects in bulk. Check the latest JavaDocs for details.

Cayenne Helper Class

There is a useful helper class called Cayenne (fully-qualified name org.apache.cayenne.Cayenne) that builds on the ObjectContext API to provide a number of very common operations. E.g. get a primary key (most entities do not model the PK as an object property):

long pk = Cayenne.longPKForObject(artist);

It also provides the reverse operation, finding an object given a known PK:

Artist artist = Cayenne.objectForPK(context, Artist.class, 34579);

If a query is expected to return 0 or 1 object, the Cayenne helper class can be used to find this object. It throws an exception if more than one object matched the query:

Artist artist = (Artist) Cayenne.objectForQuery(context, new SelectQuery(Artist.class));

Feel free to explore the Cayenne class API for other useful methods.
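The "0 or 1 result" contract of objectForQuery can be expressed as a small generic helper over a result list. This sketch is hypothetical: the name `zeroOrOne` is made up and this is not how `Cayenne.objectForQuery` is implemented. In this model an empty result yields null, and more than one match throws.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Hypothetical helper mirroring the "0 or 1 result" contract:
// null for no match, the object for exactly one match, an exception for more.
class SingleResultDemo {

    static <T> T zeroOrOne(List<T> results) {
        switch (results.size()) {
            case 0: return null;
            case 1: return results.get(0);
            default:
                throw new IllegalStateException("Expected at most one object, got " + results.size());
        }
    }

    public static void main(String[] args) {
        String none = zeroOrOne(Collections.<String>emptyList());
        String one = zeroOrOne(Arrays.asList("Dali"));
        System.out.println(none); // null
        System.out.println(one);  // Dali

        try {
            zeroOrOne(Arrays.asList("Dali", "Picasso"));
        } catch (IllegalStateException e) {
            System.out.println(e.getMessage()); // Expected at most one object, got 2
        }
    }
}
```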

ObjectContext Nesting

In all the examples shown so far an ObjectContext would directly connect to a database to select data or synchronize its state (either via commit or rollback). However, another context can be used in all these scenarios instead of a database. This concept is called ObjectContext "nesting". Nesting is a parent/child relationship between two contexts, where the child is the nested context and selects or commits its objects via the parent.

Nesting is useful to create isolated object editing areas (child contexts) whose changes can all be committed to an intermediate in-memory store (the parent context), or rolled back without affecting changes already recorded in the parent. Think cascading GUI dialogs, or parallel AJAX requests coming to the same session.

In theory Cayenne supports any number of nesting levels; however, applications should generally stay with one or two, as deep hierarchies are likely to degrade the performance of the deeply nested child contexts. This is because each context in a nesting chain has to update its own objects during most operations.
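The parent/child flow of nesting can be sketched with a toy context that reads through its parent and pushes changes either one level up or all the way down the chain. This is a hypothetical model: the "database" is a `Map`, `Ctx` is not an ObjectContext, and its method names merely echo the nested-context calls shown later in this section. Each cascading commit in the model touches every level of the chain, which hints at why deep hierarchies cost more.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical model of context nesting: a child reads through its parent and
// commits either into the parent only, or down the whole chain to the
// "database" (a plain map here). Not Cayenne code.
class NestingDemo {

    static final class Ctx {
        private final Ctx parent;                    // null for a top-level context
        private final Map<String, String> database;  // only used by the top-level context
        private final Map<String, String> changes = new HashMap<>();

        Ctx(Map<String, String> database) { this.parent = null; this.database = database; }
        Ctx(Ctx parent) { this.parent = parent; this.database = null; }

        String read(String key) {
            if (changes.containsKey(key)) return changes.get(key);
            return parent != null ? parent.read(key) : database.get(key);
        }

        void write(String key, String value) { changes.put(key, value); }

        // Push local changes one level up (the top-level context's "parent" is the database).
        void commitChangesToParent() {
            if (parent != null) parent.changes.putAll(changes);
            else database.putAll(changes);
            changes.clear();
        }

        // Cascade the commit through every parent down to the database.
        void commitChanges() {
            commitChangesToParent();
            if (parent != null) parent.commitChanges();
        }

        // Drop only this context's changes; the parent keeps its own.
        void rollbackChangesLocally() { changes.clear(); }

        // Drop changes in this context and in every parent.
        void rollbackChanges() {
            changes.clear();
            if (parent != null) parent.rollbackChanges();
        }
    }

    public static void main(String[] args) {
        Map<String, String> db = new HashMap<>();
        db.put("artist.1.name", "Salvador");

        Ctx parent = new Ctx(db);
        Ctx nested = new Ctx(parent);

        nested.write("artist.1.name", "Dali");
        nested.commitChangesToParent();
        System.out.println(parent.read("artist.1.name")); // Dali
        System.out.println(db.get("artist.1.name"));      // Salvador: not in the DB yet

        nested.write("artist.1.name", "Picasso");
        nested.rollbackChangesLocally();
        System.out.println(nested.read("artist.1.name")); // Dali, read through the parent

        nested.commitChanges();
        System.out.println(db.get("artist.1.name"));      // Dali
    }
}
```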

Cayenne ROP is an extreme case of nesting where a child context is located in a separate JVM and communicates with its parent via a web service. ROP is discussed in detail in the following chapters. Here we concentrate on same-VM nesting.

To create a nested context, use an instance of ServerRuntime, passing it the desired parent:

ObjectContext parent = runtime.newContext();
ObjectContext nested = runtime.newContext(parent);

From here a nested context operates just like a regular context (you can perform queries, create and delete objects, etc.). The only difference is that commit and rollback operations can either be limited to synchronization with the parent, or cascade all the way to the database:

// merges nested context changes into the parent context
nested.commitChangesToParent();

// regular 'commitChanges' cascades commit through the chain
// of parent contexts all the way to the database
nested.commitChanges();

// unrolls all local changes, getting context in a state identical to parent
nested.rollbackChangesLocally();

// regular 'rollbackChanges' cascades rollback through the chain of contexts
// all the way to the topmost parent
nested.rollbackChanges();

Generic Persistent Objects

As described in the CayenneModeler chapter, Cayenne supports mapping of completely generic classes to specific entities. Although for convenience most applications should stick with entity-specific class mappings, the generic feature offers some interesting possibilities, such as creating mappings completely on the fly in a running application.

Generic objects are first class citizens in Cayenne, and all common persistent operations apply to them as well. There are some peculiarities, however, described below.

When creating a new generic object, either cast your ObjectContext to DataContext (that provides newObject(String) API), or provide your object with an explicit ObjectId:

// Option 1: cast to DataContext, which provides newObject(String)
DataObject generic = ((DataContext) context).newObject("GenericEntity");

// Option 2: create the object directly and give it an explicit ObjectId
DataObject generic = new CayenneDataObject();
generic.setObjectId(new ObjectId("GenericEntity"));
context.registerNewObject(generic);

A SelectQuery for a generic object should be created by passing the entity name String to the constructor, instead of a Java class:

SelectQuery query = new SelectQuery("GenericEntity");

Use the DataObject API to access and modify properties of a generic object:

String name = (String) generic.readProperty("name");
generic.writeProperty("name", "New Name");

This is how an application can obtain the entity name of a generic object:

String entityName = generic.getObjectId().getEntityName();

Transactions

Considering how much attention is given to managing transactions in most other ORMs, transactions have been conspicuously absent from the ObjectContext discussion till now. The reason is that transactions are seamless in Cayenne in all but a few special cases. ObjectContext is an in-memory container of objects that is disconnected from the database, except when it needs to run an operation. So it does not care about any surrounding transaction scope. Sure enough all database operations are transactional, so when an application does a commit, all SQL execution is wrapped in a database transaction. But this is done behind the scenes and is rarely a concern to the application code.

Two cases where transactions need to be taken into consideration are container- and application-managed transactions.

If you are using Spring, EJB or another environment that manages transactions, you’ll likely need to switch the Cayenne runtime into "external transactions mode". This is done by setting the DI configuration property defined in Constants.SERVER_EXTERNAL_TX_PROPERTY (see Appendix A). If this property is set to "true", Cayenne assumes that all JDBC Connections obtained by the runtime come from a transactional DataSource managed by the container. In this case Cayenne does not attempt to commit or roll back the connections, leaving it up to the container to do that when appropriate.

In the second scenario, an application might need to define its own transaction scope that spans more than one Cayenne operation, e.g. two sequential commits that need to be rolled back together in case of failure. This can be done via the ServerRuntime.performInTransaction method:

Integer result = runtime.performInTransaction(() -> {
    // commit one or more contexts
    context1.commitChanges();
    context2.commitChanges();
    ....
    // after changing some objects in context1, commit again
    context1.commitChanges();
    ....
    // return an arbitrary result or null if we don't care about the result
    return 5;
});

When inside a transaction, the current thread's Transaction object can be accessed via a static method:

Transaction tx = BaseTransaction.getThreadTransaction();

2.4. Expressions

Expressions Overview

Cayenne provides a simple yet powerful object-based expression language. The most common uses of expressions are building query qualifiers and orderings, which Cayenne later converts to SQL, and evaluating them in memory against specific objects (to access certain values in the object graph or to perform in-memory object filtering and sorting). Cayenne provides an API to build expressions in code and a parser to create expressions from strings.

Path Expressions

Before discussing how to build expressions, it is important to understand one group of expressions widely used in Cayenne: path expressions. There are two types of path expressions, object and database, used for navigating graphs of connected objects or joined DB tables respectively. Object paths are much more commonly used, as after all Cayenne is supposed to provide a degree of isolation of the object model from the database. However, database paths are helpful in certain situations. The general structure of path expressions is the following:

[db:]segment[+][.segment[+]...]

- db: is an optional prefix indicating that the following path is a DB path. Otherwise it is an object path.
- segment is the name of a property (relationship or attribute in Cayenne terms) in the path. A path must have at least one segment; segments are separated by a dot (".").
- + marks an "OUTER JOIN" path component. Currently "+" only has an effect when translated to SQL as an OUTER JOIN. When evaluating expressions in memory, it is ignored.
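To make the grammar concrete, here is a hypothetical parser that splits a path string into its `db:` flag and segments, recording which segments carry the "+" outer-join marker. It is not Cayenne's expression parser and handles only the grammar shown above (no `obj:` prefix, no aliases).

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical parser for the path grammar [db:]segment[+][.segment[+]...].
// Not Cayenne's parser; it only splits a path into its parts.
class PathDemo {

    static final class Segment {
        final String name;
        final boolean outerJoin;
        Segment(String name, boolean outerJoin) { this.name = name; this.outerJoin = outerJoin; }
        @Override public String toString() { return name + (outerJoin ? "+" : ""); }
    }

    static final class ParsedPath {
        final boolean dbPath;
        final List<Segment> segments;
        ParsedPath(boolean dbPath, List<Segment> segments) { this.dbPath = dbPath; this.segments = segments; }
    }

    static ParsedPath parse(String path) {
        boolean db = path.startsWith("db:");
        String body = db ? path.substring(3) : path;
        List<Segment> segments = new ArrayList<>();
        // limit -1 keeps empty tokens, so "a..b" or a trailing "." is rejected
        for (String raw : body.split("\\.", -1)) {
            boolean outer = raw.endsWith("+");
            String name = outer ? raw.substring(0, raw.length() - 1) : raw;
            if (name.isEmpty()) throw new IllegalArgumentException("Empty segment in path: " + path);
            segments.add(new Segment(name, outer));
        }
        return new ParsedPath(db, segments);
    }

    public static void main(String[] args) {
        ParsedPath p = parse("artist.exhibits+.closingDate");
        System.out.println(p.dbPath + " " + p.segments); // false [artist, exhibits+, closingDate]

        ParsedPath d = parse("db:artist.artistExhibits.exhibit.CLOSING_DATE");
        System.out.println(d.dbPath + " " + d.segments.size()); // true 4
    }
}
```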

An object path expression represents a chain of property names rooted in a certain (unspecified during expression creation) object and "navigating" to its related value. E.g. a path expression "artist.name" might be a property path starting from a Painting object, pointing to the related Artist object, and then to its name attribute. A few more examples:

- name - can be used to navigate (read) the "name" property of a Person (or any other type of object that has a "name" property).
- artist.exhibits.closingDate - can be used to navigate to the closing date of any of the exhibits of a Painting’s Artist object.
- artist.exhibits+.closingDate - same as the previous example, but when translated into SQL, an OUTER JOIN will be used for "exhibits".

Similarly a database path expression is a dot-separated path through DB table joins and columns. In Cayenne joins are mapped as DbRelationships with some symbolic names (the closest concept to DbRelationship name in the DB world is a named foreign key constraint. But DbRelationship names are usually chosen arbitrarily, without regard to constraints naming or even constraints presence). A database path therefore might look like this - db:dbrelationshipX.dbrelationshipY.COLUMN_Z. More specific examples:

- db:NAME - can be used to navigate to the value of the "NAME" column of some unspecified table.
- db:artist.artistExhibits.exhibit.CLOSING_DATE - can be used to match a closing date of any of the exhibits of a related artist record.

Cayenne supports "aliases" in path Expressions. E.g. the same expression can be written using explicit path or an alias:

- artist.exhibits.closingDate - full path
- e.closingDate - alias e is used for artist.exhibits.

A SelectQuery using the second form of the path expression must be made aware of the alias via SelectQuery.aliasPathSplits(..), otherwise an Exception will be thrown. The main use of aliases is to allow users to control how SQL joins are generated if the same path is encountered more than once in any given Expression. Each alias for any given path results in a separate join. Without aliases, a single join will be used for a group of matching paths.

Creating Expressions from Strings

While in most cases users are likely to rely on the API from the following section for expression creation, we’ll start by showing String expressions, as this will help to understand the semantics. A Cayenne expression can be represented as a String, which can be converted to an expression object using the ExpressionFactory.exp static method. Here is an example:

String expString = "name like 'A%' and price < 1000";
Expression exp = ExpressionFactory.exp(expString);

This particular expression may be used to match Paintings whose names start with "A" and whose price is less than $1000. While this example is pretty self-explanatory, there are a few points worth mentioning. "name" and "price" here are object paths discussed earlier. As always, paths themselves are not attached to a specific root entity and can be applied to any entity that has similarly named attributes or relationships. So when we are saying that this expression "may be used to match Paintings", we are implying that there may be other entities for which this expression is valid. Now the expression details…

Character constants that are not paths or numeric values should be enclosed in single or double quotes. The two expressions below are equivalent:

name = 'ABC'

// double quotes are escaped inside Java Strings of course
name = \"ABC\"

Case sensitivity. Expression operators are case sensitive and are usually lowercase. Complex words follow the Java camel-case style:

// valid
name likeIgnoreCase 'A%'

// invalid - will throw a parse exception
name LIKEIGNORECASE 'A%'

Grouping with parentheses:

value = (price + 250.00) * 3

Path prefixes. Object expressions are unquoted strings, optionally prefixed by "obj:" (usually they are not prefixed at all). Database expressions are always prefixed with "db:". A special kind of prefix, not discussed yet, is "enum:", which prefixes an enumeration constant:

// object path
name = 'Salvador Dali'

// same object path - a rarely used form
obj:name = 'Salvador Dali'

// multi-segment object path
artist.name = 'Salvador Dali'

// db path
db:NAME = 'Salvador Dali'

// enumeration constant
name = enum:org.foo.EnumClass.VALUE1

Binary conditions are expressions that contain a path on the left, a value on the right, and some operation between them, such as equals, like, etc. They can be used as qualifiers in SelectQueries:

name like 'A%'

Parameters. Expressions can contain named parameters (names that start with "$") that can be substituted with values either by name or by position. Parameterized expressions allow creating reusable expression templates. Also, if an Expression contains a complex object that doesn’t have a simple String representation (e.g. a Date, a DataObject, an ObjectId), parameterizing such an expression is the only way to represent it as a String. Here are examples of both named and positional parameter bindings:

Expression template = ExpressionFactory.exp("name = $name");
...
// name binding
Map<String, Object> p1 = Collections.singletonMap("name", "Salvador Dali");
Expression qualifier1 = template.params(p1);
...
// positional binding
Expression qualifier2 = template.paramsArray("Monet");

Positional binding is usually shorter. You can pass positional bindings to the "exp(..)" factory method (its second argument is a params vararg):

Expression qualifier = ExpressionFactory.exp("name = $name", "Monet");

In parameterized expressions with a LIKE clause, SQL wildcards must be part of the parameter values and not of the expression string itself:

Expression qualifier = ExpressionFactory.exp("name like $name", "Salvador%");

When matching on a relationship, the value parameter must be either a Persistent object, an org.apache.cayenne.ObjectId, or a numeric ID value (for single column IDs). E.g.:

Artist dali = ... // assume we fetched this one already
Expression qualifier = ExpressionFactory.exp("artist = $artist", dali);

When using positional binding, Cayenne expects values for all parameters to be present. Binding by name offers extra flexibility: subexpressions with uninitialized parameters are automatically pruned from the expression. So e.g. if certain parts of the expression criteria are not provided to the application, you can still build a valid expression:

Expression template = ExpressionFactory.exp("name like $name and dateOfBirth > $date");
...
Map<String, Object> p1 = Collections.singletonMap("name", "Salvador%");
Expression qualifier1 = template.params(p1);

// "qualifier1" is now "name like 'Salvador%'".
// 'dateOfBirth > $date' condition was pruned, as no value was specified for
// the $date parameter

Null handling. Java nulls as operands are handled no differently from normal values. Instead of using special conditional operators, like SQL does (IS NULL, IS NOT NULL), "=" and "!=" expressions are used directly with null values. It is up to Cayenne to translate expressions with nulls to valid SQL.
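The two behaviors just described, pruning conditions whose named parameter is missing and translating null comparisons into IS NULL / IS NOT NULL, can be illustrated with a toy renderer for an AND-only template. This is a hypothetical model: the `Condition` class, the column names and the SQL shape are illustrative and are not what Cayenne generates. In this model a key present in the map with a null value is bound (as IS NULL), while an absent key prunes the condition.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.StringJoiner;

// Hypothetical model of an AND-ed expression template with named parameters.
// Conditions whose parameter is missing from the map are pruned, and a bound
// null turns "=" / "!=" into IS NULL / IS NOT NULL. Not Cayenne's SQL translator.
class ParamDemo {

    static final class Condition {
        final String column, op, param; // e.g. NAME, LIKE, name
        Condition(String column, String op, String param) {
            this.column = column; this.op = op; this.param = param;
        }
    }

    static String toSql(List<Condition> template, Map<String, Object> params, List<Object> bindings) {
        StringJoiner where = new StringJoiner(" AND ");
        for (Condition c : template) {
            if (!params.containsKey(c.param)) continue; // pruned: no value supplied
            Object value = params.get(c.param);
            if (value == null && c.op.equals("=")) {
                where.add(c.column + " IS NULL");
            } else if (value == null && c.op.equals("!=")) {
                where.add(c.column + " IS NOT NULL");
            } else {
                where.add(c.column + " " + c.op + " ?");
                bindings.add(value);
            }
        }
        return where.toString();
    }

    public static void main(String[] args) {
        List<Condition> template = Arrays.asList(
                new Condition("NAME", "LIKE", "name"),
                new Condition("DATE_OF_BIRTH", ">", "date"));

        Map<String, Object> p1 = new HashMap<>();
        p1.put("name", "Salvador%");
        List<Object> bindings = new ArrayList<>();
        System.out.println(toSql(template, p1, bindings) + " " + bindings);
        // NAME LIKE ? [Salvador%]   (the "date" condition was pruned)

        Map<String, Object> p2 = new HashMap<>();
        p2.put("name", null);
        List<Condition> eq = Arrays.asList(new Condition("NAME", "=", "name"));
        System.out.println(toSql(eq, p2, new ArrayList<>())); // NAME IS NULL
    }
}
```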

| A formal definition of the expression grammar is provided in Appendix C |

Creating Expressions via API

Creating expressions from Strings is a powerful and dynamic approach; however, a safer alternative is to use the Java API. It provides compile-time checking of expression validity. The API in question is provided by the ExpressionFactory class (that we’ve seen already), the Property class and the Expression class itself. ExpressionFactory contains a number of self-explanatory static methods that can be used to build expressions. E.g.:

// String expression: name like 'A%' and price < 1000
Expression e1 = ExpressionFactory.likeExp("name", "A%");
Expression e2 = ExpressionFactory.lessExp("price", 1000);
Expression finalExp = e1.andExp(e2);

| The last line in the example above shows how to create a new expression by "chaining" two other expressions. A common error when chaining expressions is to assume that "andExp" and "orExp" append another expression to the current expression. In fact a new expression is created. I.e. Expression API treats existing expressions as immutable. |

As discussed earlier, Cayenne supports aliases in path Expressions, allowing users to control how SQL joins are generated if the same path is encountered more than once in the same Expression. Two ExpressionFactory methods implicitly generate aliases to "split" match paths into individual joins if needed:

Expression matchAllExp(String path, Collection values)
Expression matchAllExp(String path, Object... values)

The "path" argument to both of these methods can use a split character (a pipe symbol '|') instead of a dot to indicate that the relationship following it should be split into a separate set of joins, one per collection value. There can be at most one split in any given path. A split must always precede a relationship, e.g. "|exhibits.paintings", "exhibits|paintings", etc. Internally Cayenne generates distinct aliases for each of the split expressions, forcing separate joins.

While ExpressionFactory is pretty powerful, there’s an even easier way to create expressions using static Property objects generated by Cayenne for each persistent class. Some examples:

// Artist.NAME is generated by Cayenne and has a type of Property<String>
Expression e1 = Artist.NAME.eq("Pablo");

// Chaining multiple properties into a path..
// Painting.ARTIST is generated by Cayenne and has a type of Property<Artist>
Expression e2 = Painting.ARTIST.dot(Artist.NAME).eq("Pablo");

Property objects provide an API mostly analogous to ExpressionFactory, though it is significantly shorter and is aware of the value types. It provides compile-time checks of both property names and types of arguments in conditions. We will use the Property-based API in further examples.

Evaluating Expressions in Memory

When used in a query, an expression is converted to an SQL WHERE clause (or ORDER BY clause) by Cayenne during query execution. Thus the actual evaluation against the data is done by the database engine. However, the same expressions can also be used for accessing object properties, calculating values, and in-memory filtering.

Checking whether an object satisfies an expression:

Expression e = Artist.NAME.in("John", "Bob");
Artist artist = ...
if (e.match(artist)) {
    ...
}

Reading a property value:

String name = (String) Artist.NAME.path().evaluate(artist);

Filtering a list of objects:

Expression e = Artist.NAME.in("John", "Bob");
List<Artist> unfiltered = ...
List<Artist> filtered = e.filterObjects(unfiltered);

| A current limitation of in-memory expressions is that no collections are permitted in the property path. |
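The idea behind typed properties and in-memory evaluation can be sketched with plain `Function` and `Predicate` objects. The `Prop` class below is a hypothetical stand-in for Cayenne's Property, reduced to `evaluate` and `in`, and `filter` mirrors `filterObjects` by returning a new list. Passing a value of the wrong type to `NAME.in(...)` fails at compile time, which is the kind of check the Property API provides.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.function.Function;
import java.util.function.Predicate;

// Hypothetical, much-simplified typed property: it mimics how Artist.NAME.in(...)
// produces a condition that can be matched against objects in memory.
// This is not Cayenne's Property class; names are illustrative.
class InMemoryDemo {

    static final class Artist {
        private final String name;
        Artist(String name) { this.name = name; }
        String getName() { return name; }
        @Override public String toString() { return name; }
    }

    static final class Prop<E, V> {
        private final Function<E, V> getter;
        Prop(Function<E, V> getter) { this.getter = getter; }

        // Reads the property value from an object.
        V evaluate(E object) { return getter.apply(object); }

        // Builds an "in" condition; V makes the argument types compile-time checked.
        @SafeVarargs
        final Predicate<E> in(V... values) {
            List<V> allowed = Arrays.asList(values);
            return object -> allowed.contains(evaluate(object));
        }
    }

    // Like filterObjects(), returns a new list and leaves the input untouched.
    static <E> List<E> filter(Predicate<E> condition, List<E> unfiltered) {
        List<E> result = new ArrayList<>();
        for (E e : unfiltered) if (condition.test(e)) result.add(e);
        return result;
    }

    static final Prop<Artist, String> NAME = new Prop<>(Artist::getName);

    public static void main(String[] args) {
        Predicate<Artist> e = NAME.in("John", "Bob");
        List<Artist> unfiltered = Arrays.asList(new Artist("John"), new Artist("Ann"), new Artist("Bob"));

        System.out.println(e.test(unfiltered.get(0)));        // true
        System.out.println(NAME.evaluate(unfiltered.get(1))); // Ann
        System.out.println(filter(e, unfiltered));            // [John, Bob]
        // NAME.in(42) would not compile: the value type is checked at compile time.
    }
}
```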

Translating Expressions to EJBQL

EJBQL is a textual query language that can be used with Cayenne. In some situations, it is convenient to be able to convert Expression instances into EJBQL. Expressions support this conversion. An example is shown below.

String serial = ...
Expression e = Pkg.SERIAL.eq(serial);
List<Object> params = new ArrayList<Object>();
EJBQLQuery query = new EJBQLQuery("SELECT p FROM Pkg p WHERE " + e.toEJBQL(params, "p"));

for (int i = 0; i < params.size(); i++) {
    query.setParameter(i + 1, params.get(i));
}

This would be equivalent to the following purely EJBQL querying logic:

EJBQLQuery query = new EJBQLQuery("SELECT p FROM Pkg p WHERE p.serial = ?1");
query.setParameter(1, serial);

2.5. Orderings

An Ordering object defines how a list of objects should be ordered. Orderings are essentially path expressions combined with a sorting strategy. Creating an Ordering:

Ordering o = new Ordering(Painting.NAME_PROPERTY, SortOrder.ASCENDING);

Like expressions, orderings are translated into SQL as parts of queries (and the sorting occurs in the database). Also like expressions, orderings can be used in memory, naturally to sort objects:

Ordering o = new Ordering(Painting.NAME_PROPERTY, SortOrder.ASCENDING_INSENSITIVE);
List<Painting> list = ...
o.orderList(list);

Note that unlike filtering with Expressions, ordering is performed in-place. The list object itself is reordered and no new list is created.
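For comparison, the same kind of in-place, case-insensitive ascending sort can be expressed with the standard `List.sort` and `Comparator` APIs. This is a plain-Java analogue, not Cayenne's Ordering implementation; the `Painting` class here is a minimal stand-in.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

// Plain-Java analogue (not Cayenne's Ordering class) of sorting by a property
// with a case-insensitive ascending strategy. List.sort reorders the given
// list in place, matching the in-place behavior of orderList.
class OrderingDemo {

    static final class Painting {
        final String name;
        Painting(String name) { this.name = name; }
        @Override public String toString() { return name; }
    }

    static void orderByNameIgnoreCase(List<Painting> list) {
        list.sort(Comparator.comparing((Painting p) -> p.name, String.CASE_INSENSITIVE_ORDER));
    }

    public static void main(String[] args) {
        List<Painting> list = new ArrayList<>(Arrays.asList(
                new Painting("guernica"), new Painting("Dream"), new Painting("bather")));

        orderByNameIgnoreCase(list); // no new list is returned; "list" itself is reordered
        System.out.println(list);    // [bather, Dream, guernica]
    }
}
```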

2.6. Queries

Queries are Java objects used by the application to communicate with the database. Cayenne knows how to translate queries into SQL statements appropriate for a particular database engine. Most often queries are used to find objects matching certain criteria, but there are other types of queries too, e.g. those that run native SQL, call DB stored procedures, etc. When committing objects, Cayenne itself creates special queries to insert/update/delete rows in the database.

There are a number of built-in queries in Cayenne, described later in this chapter. Most of the newer queries use a fluent API and can be created and executed as easy-to-read one-liners. Users can define their own query types to abstract certain DB interactions that for whatever reason cannot be adequately described by the built-in set.

Queries can be roughly categorized as "object" and "native". Object queries (most notably ObjectSelect, SelectById, and EJBQLQuery) are built with abstractions originating in the object model (the "object" side in the "object-relational" divide). E.g. ObjectSelect is assembled from a Java class of the objects to fetch, a qualifier expression, orderings, etc. - all of this expressed in terms of the object model.

Native queries describe a desired DB operation as SQL code (SQLSelect, SQLTemplate query) or a reference to a stored procedure (ProcedureQuery), etc. The results of native queries are usually presented as Lists of Maps, with each map representing a row in the DB (a term "data row" is often used to describe such a map). They can potentially be converted to objects, however it may take a considerable effort to do so. Native queries are also less (if at all) portable across databases than object queries.
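The extra effort of turning data rows into objects comes largely from doing the column-to-field mapping by hand. The sketch below assumes rows shaped like `Map<String, Object>` keyed by the `ID` and `NAME` columns of the ARTIST table seen elsewhere in this guide; the `Artist` class and `toArtists` method are hypothetical stand-ins, not generated Cayenne classes.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical illustration of converting native-query "data rows" (one Map per
// DB row, keyed by column name) into objects. The column-to-field mapping has
// to be written by hand, which is the effort the text refers to.
class DataRowDemo {

    static final class Artist {
        final long id;
        final String name;
        Artist(long id, String name) { this.id = id; this.name = name; }
    }

    static List<Artist> toArtists(List<Map<String, Object>> rows) {
        List<Artist> artists = new ArrayList<>();
        for (Map<String, Object> row : rows) {
            // Column names and Java types must match what the SQL actually returned.
            long id = ((Number) row.get("ID")).longValue();
            String name = (String) row.get("NAME");
            artists.add(new Artist(id, name));
        }
        return artists;
    }

    public static void main(String[] args) {
        Map<String, Object> row = new LinkedHashMap<>();
        row.put("ID", 34579L);
        row.put("NAME", "Picasso");

        List<Map<String, Object>> rows = new ArrayList<>();
        rows.add(row);

        Artist a = toArtists(rows).get(0);
        System.out.println(a.id + " " + a.name); // 34579 Picasso
    }
}
```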

ObjectSelect

Selecting objects

| ObjectSelect supersedes older SelectQuery. SelectQuery is still available and supported, but will be deprecated in the future. |

ObjectSelect is the most commonly used query in Cayenne applications. This may be the only query you will ever need. It returns a list of persistent objects (or data rows) of a certain type specified in the query:

List<Artist> objects = ObjectSelect.query(Artist.class).select(context);

This returned all rows in the "ARTIST" table. If the logs were turned on, you might see the following SQL printed:

INFO: SELECT t0.DATE_OF_BIRTH, t0.NAME, t0.ID FROM ARTIST t0
INFO: === returned 5 row. - took 5 ms.

This SQL was generated by Cayenne from the ObjectSelect above. ObjectSelect can have a qualifier to select only the data matching specific criteria. A qualifier is simply an Expression (Expressions were discussed in the previous chapter), appended to the query using the "where" method. If you only want artists whose name begins with 'Pablo', you might use the following qualifier expression:

List<Artist> objects = ObjectSelect.query(Artist.class)
        .where(Artist.NAME.like("Pablo%"))
        .select(context);

The SQL will look different this time:

INFO: SELECT t0.DATE_OF_BIRTH, t0.NAME, t0.ID FROM ARTIST t0 WHERE t0.NAME LIKE ? [bind: 1->NAME:'Pablo%']
INFO: === returned 1 row. - took 6 ms.

ObjectSelect allows assembling a qualifier from parts, using the "and" and "or" methods to chain them together:

List<Artist> objects = ObjectSelect.query(Artist.class)
        .where(Artist.NAME.like("A%"))
        .and(Artist.DATE_OF_BIRTH.gt(someDate))
        .select(context);

To order the results of ObjectSelect, one or more orderings can be applied:

List<Artist> objects = ObjectSelect.query(Artist.class)
    .orderBy(Artist.DATE_OF_BIRTH.desc())
    .orderBy(Artist.NAME.asc())
    .select(context);

There are a number of other useful methods in ObjectSelect that define what to select and how to optimize database interaction (prefetching, caching, fetch offset and limit, pagination, etc.). Some of them are discussed in separate chapters on caching and performance optimization. Others are fairly self-explanatory. Please check the API docs for the full extent of the ObjectSelect features.
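Cayenne turns the two orderings in the example above into an ORDER BY clause that the database executes. Purely as a point of comparison, the same two-level ordering applied to objects already in memory can be written with the standard java.util.Comparator API (not a Cayenne API). The Artist class and its int birthYear field are simplified, hypothetical stand-ins.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

final class OrderingSketch {

    // Hypothetical stand-in for Artist; birthYear simplifies DATE_OF_BIRTH.
    static final class Artist {
        final String name;
        final int birthYear;

        Artist(String name, int birthYear) {
            this.name = name;
            this.birthYear = birthYear;
        }
    }

    // Same intent as ORDER BY DATE_OF_BIRTH DESC, NAME ASC: the first ordering has the
    // highest priority, and the second is only consulted for ties. Null names are not handled.
    static final Comparator<Artist> BIRTH_DESC_THEN_NAME_ASC =
            Comparator.comparingInt((Artist a) -> a.birthYear)
                    .reversed()
                    .thenComparing((Artist a) -> a.name);

    static List<Artist> sorted(List<Artist> artists) {
        List<Artist> copy = new ArrayList<>(artists); // leave the input list untouched
        copy.sort(BIRTH_DESC_THEN_NAME_ASC);
        return copy;
    }
}
```

As in the SQL, the first ordering decides the order and the second one only breaks ties.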

Selecting individual columns

ObjectSelect query can be used to fetch individual properties of objects via a type-safe API:

List<String> names = ObjectSelect
    .columnQuery(Artist.class, Artist.ARTIST_NAME)
    .select(context);

And here is an example of selecting several properties. The result is a list of Object[]:

List<Object[]> nameAndDate = ObjectSelect
    .columnQuery(Artist.class, Artist.ARTIST_NAME, Artist.DATE_OF_BIRTH)
    .select(context);

Selecting using aggregate functions

ObjectSelect supports aggregate functions. The most common kind of aggregation is counting records, which is easy to do:

long count = ObjectSelect.query(Artist.class).selectCount(context);

But aggregates can be used in many more cases, and can even be combined with selecting individual properties:

// this is an artificial property signaling that we want to get the full object
Property<Artist> artistProperty = Property.createSelf(Artist.class);

List<Object[]> artistAndPaintingCount = ObjectSelect.columnQuery(Artist.class, artistProperty, Artist.PAINTING_ARRAY.count())
    .where(Artist.ARTIST_NAME.like("a%"))
    .having(Artist.PAINTING_ARRAY.count().lt(5L))
    .orderBy(Artist.PAINTING_ARRAY.count().desc(), Artist.ARTIST_NAME.asc())
    .select(context);

for (Object[] next : artistAndPaintingCount) {
    Artist artist = (Artist) next[0];
    long paintings = (Long) next[1];
    System.out.println(artist.getArtistName() + " has " + paintings + " paintings");
}

Here is the generated SQL for this query:

SELECT DISTINCT t0.ARTIST_NAME, t0.DATE_OF_BIRTH, t0.ARTIST_ID, COUNT(t1.PAINTING_ID)
FROM ARTIST t0 JOIN PAINTING t1 ON (t0.ARTIST_ID = t1.ARTIST_ID)
WHERE t0.ARTIST_NAME LIKE ?
GROUP BY t0.ARTIST_NAME, t0.ARTIST_ID, t0.DATE_OF_BIRTH
HAVING COUNT(t1.PAINTING_ID) < ?
ORDER BY COUNT(t1.PAINTING_ID) DESC, t0.ARTIST_NAME

EJBQLQuery

Note: As soon as all of the EJBQLQuery capabilities become available in ObjectSelect, we are planning to deprecate EJBQLQuery.

EJBQLQuery was created as part of an experiment in adopting some Java Persistence API (JPA) approaches in Cayenne. It is a parameterized object query that is created from a query String. The String used to build an EJBQLQuery follows JPQL (JPA Query Language) syntax:

EJBQLQuery query = new EJBQLQuery("select a FROM Artist a");

JPQL details can be found in any JPA manual. Here we'll focus on how this fits into Cayenne and how EJBQL differs from other Cayenne queries.

Although most frequently EJBQLQuery is used as an alternative to ObjectSelect, there are also DELETE and UPDATE varieties available.

Note: DELETE and UPDATE do not change the state of objects in the ObjectContext. They are run directly against the database instead.

EJBQLQuery select =
    new EJBQLQuery("select a FROM Artist a WHERE a.name = 'Salvador Dali'");
List<Artist> artists = context.performQuery(select);

EJBQLQuery delete = new EJBQLQuery("delete from Painting");
context.performGenericQuery(delete);

EJBQLQuery update =
    new EJBQLQuery("UPDATE Painting AS p SET p.name = 'P2' WHERE p.name = 'P1'");
context.performGenericQuery(update);

In most cases ObjectSelect is preferred to EJBQLQuery, as it is API-based and provides better compile-time checks. However, sometimes you may want a completely scriptable object query, and this is when you might prefer EJBQL. A more practical reason for picking EJBQL over ObjectSelect is that the former offers a few extra capabilities, such as subqueries.

Just like ObjectSelect, EJBQLQuery can return a List of Object[] elements, where each entry in an array is either a DataObject or a scalar, depending on the query SELECT clause.

EJBQLQuery query = new EJBQLQuery("select a, COUNT(p) FROM Artist a JOIN a.paintings p GROUP BY a");
List<Object[]> result = context.performQuery(query);

for (Object[] artistWithCount : result) {
    Artist a = (Artist) artistWithCount[0];
    long paintingCount = (Long) artistWithCount[1]; // value of COUNT(p)
}

A result can also be a list of scalars:

EJBQLQuery query = new EJBQLQuery("select a.name FROM Artist a");
List<String> names = context.performQuery(query);

EJBQLQuery supports an "IN" clause with three different usage patterns. The following example would require three individual positional parameters (named parameters could also have been used) to be supplied.

select p from Painting p where p.paintingTitle in (?1,?2,?3)

The following example requires a single positional parameter to be supplied. The parameter can be any concrete implementation of the java.util.Collection interface, such as java.util.List or java.util.Set.

select p from Painting p where p.paintingTitle in ?1

The following example is functionally identical to the previous one.

select p from Painting p where p.paintingTitle in (?1)

It is possible to convert an Expression object used with an ObjectSelect to EJBQL. Use the Expression#appendAsEJBQL methods for this purpose.
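In the collection forms of the IN clause, a single parameter carries several values, while a JDBC "?" placeholder holds only one value. Somewhere between the query and the database, the collection therefore typically has to be expanded into one placeholder per element. The hypothetical helper below sketches only that expansion step. It is not Cayenne's EJBQL translator.

```java
import java.util.Collection;

// Hypothetical helper; it is not part of Cayenne's EJBQL translation.
final class InClauseSketch {

    // Expands a collection parameter into one "?" placeholder per element, e.g. "(?, ?, ?)".
    static String placeholders(Collection<?> values) {
        if (values.isEmpty()) {
            // "IN ()" is not valid standard SQL, so an empty collection is rejected here.
            throw new IllegalArgumentException("IN requires at least one value");
        }
        StringBuilder sql = new StringBuilder("(");
        for (int i = 0; i < values.size(); i++) {
            if (i > 0) {
                sql.append(", ");
            }
            sql.append('?');
        }
        return sql.append(')').toString();
    }

    // Builds a complete IN condition for a column, e.g. "t0.PAINTING_TITLE IN (?, ?)".
    static String inClause(String column, Collection<?> values) {
        return column + " IN " + placeholders(values);
    }
}
```

The values would then be bound in the collection's iteration order. Because IN does not depend on order, an unordered java.util.Set works just as well as a List.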

While the Cayenne Expressions discussed previously can be thought of as equivalent to the JPQL WHERE clause, and indeed they are very close, there are a few notable differences:

-

Null handling: SelectQuery translates expressions matching NULL values to the corresponding "X IS NULL" or "X IS NOT NULL" SQL syntax. EJBQLQuery, on the other hand, requires explicit "IS NULL" (or "IS NOT NULL") syntax. Otherwise the generated SQL will look like "X = NULL" (or "X <> NULL"), which never evaluates to true in SQL, so no rows will match.

-

Expression Parameters: SelectQuery uses "$" to denote named parameters (e.g. "$myParam"), while EJBQL uses ":" (e.g. ":myParam"). Also EJBQL supports positional parameters denoted by the question mark: "?3".
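The null handling difference above follows from SQL's three-valued logic. Comparing anything to NULL with "=" or "<>" yields UNKNOWN rather than TRUE, and a WHERE clause keeps a row only when its condition is TRUE. The following plain Java model (not Cayenne code) represents UNKNOWN as a null Boolean to illustrate this.

```java
// Plain Java model of SQL three-valued logic; UNKNOWN is represented by a null Boolean.
final class SqlNullLogic {

    // "x = y": UNKNOWN if either side is NULL, otherwise TRUE or FALSE.
    static Boolean equalsOp(Object x, Object y) {
        if (x == null || y == null) {
            return null;
        }
        return Boolean.valueOf(x.equals(y));
    }

    // "x <> y": also UNKNOWN if either side is NULL.
    static Boolean notEqualsOp(Object x, Object y) {
        Boolean eq = equalsOp(x, y);
        if (eq == null) {
            return null;
        }
        return Boolean.valueOf(!eq.booleanValue());
    }

    // "x IS NULL" is always TRUE or FALSE, never UNKNOWN.
    static boolean isNull(Object x) {
        return x == null;
    }

    // A WHERE clause keeps a row only if its condition is TRUE; FALSE and UNKNOWN both drop it.
    static boolean keepsRow(Boolean condition) {
        return Boolean.TRUE.equals(condition);
    }
}
```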

SelectById

This query selects objects by their ID. It was introduced in Cayenne 4.0 and uses the same "fluent" API as the ObjectSelect query.

Here is an example of how to use it:

Artist artistWithId1 = SelectById.query(Artist.class, 1)
    .prefetch(Artist.PAINTING_ARRAY.joint())
    .localCache()
    .selectOne(context);

SQLSelect and SQLExec

SQLSelect and SQLExec are essentially "fluent" versions of the older SQLTemplate query. SQLSelect can be used (as the name suggests) to select custom data in the form of entities, separate columns, or a collection of DataRows. SQLExec is designed to just execute any raw SQL code (e.g. updates, deletes, DDL, etc.). These queries support all directives described in the SQLTemplate section.
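Templates used by these queries mark parameters with directives such as #bind($name). These end up as JDBC "?" placeholders, with the named values bound to them. The following is a hypothetical, heavily simplified sketch of that substitution using java.util.regex. Cayenne's actual template processing supports many more directives and options, so treat this only as an illustration of the idea.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical illustration of #bind substitution; this is not Cayenne's template engine.
final class BindDirectiveSketch {
    // Matches only the simplest form, #bind($name); real directives accept more arguments.
    private static final Pattern BIND = Pattern.compile("#bind\\(\\$(\\w+)\\)");

    private final String sql;              // SQL text with "?" placeholders
    private final List<Object> parameters; // bound values, in placeholder order

    private BindDirectiveSketch(String sql, List<Object> parameters) {
        this.sql = sql;
        this.parameters = parameters;
    }

    static BindDirectiveSketch process(String template, Map<String, ?> params) {
        Matcher matcher = BIND.matcher(template);
        StringBuffer out = new StringBuffer(); // StringBuffer keeps this compatible with Java 8
        List<Object> values = new ArrayList<>();
        while (matcher.find()) {
            String name = matcher.group(1);
            if (!params.containsKey(name)) {
                throw new IllegalArgumentException("No value for parameter: " + name);
            }
            values.add(params.get(name));
            matcher.appendReplacement(out, "?");
        }
        matcher.appendTail(out);
        return new BindDirectiveSketch(out.toString(), values);
    }

    String sql() {
        return sql;
    }

    List<Object> parameters() {
        return Collections.unmodifiableList(parameters);
    }
}
```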

Here is an example of how to use SQLSelect:

// Selecting objects
List<Painting> paintings = SQLSelect
    .query(Painting.class, "SELECT * FROM PAINTING WHERE PAINTING_TITLE LIKE #bind($title)")
    .params("title", "painting%")
    .upperColumnNames()
    .localCache()
    .limit(100)
    .select(context);

// Selecting scalar values
List<String> paintingNames = SQLSelect
    .scalarQuery(String.class, "SELECT PAINTING_TITLE FROM PAINTING WHERE ESTIMATED_PRICE > #bind($price)")
    .params("price", 100000)
    .select(context);

And here is an example of how to use SQLExec:

int inserted = SQLExec
    .query("INSERT INTO ARTIST (ARTIST_ID, ARTIST_NAME) VALUES (#bind($id), #bind($name))")
    .paramsArray(55, "Picasso")
    .update(context);

MappedSelect and MappedExec

MappedSelect and MappedExec are queries that are just references to other queries stored in the DataMap. The actual stored query can be a SelectQuery, SQLTemplate, EJBQLQuery, etc. The difference between MappedSelect and MappedExec is (as reflected in their names) whether the underlying query is intended to select data or just to run some generic SQL code.

Note: These queries are "fluent" versions of the deprecated NamedQuery class.
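Conceptually, a mapped query is looked up by name and only then executed. The hypothetical registry below illustrates that indirection by storing query definitions as plain SQL strings in a HashMap. In Cayenne the stored queries live in the DataMap and are full query objects rather than strings.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical registry; in Cayenne, stored queries live in the DataMap as full query objects.
final class NamedQueryRegistry {
    private final Map<String, String> definitions = new HashMap<>();

    // Stores a query definition under a unique name.
    void register(String name, String definition) {
        if (definitions.containsKey(name)) {
            throw new IllegalStateException("Duplicate query name: " + name);
        }
        definitions.put(name, definition);
    }

    // Callers refer to a query only by name; the definition is resolved when it is needed.
    String resolve(String name) {
        String definition = definitions.get(name);
        if (definition == null) {
            throw new IllegalArgumentException("No query named: " + name);
        }
        return definition;
    }
}
```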

Here is an example of how to use MappedSelect:

List<Artist> results = MappedSelect.query("artistsByName", Artist.class)
    .param("name", "Picasso")
    .select(context);

And here is an example of MappedExec:

QueryResult result = MappedExec.query("updateQuery")
    .param("var", "value")
    .execute(context);
System.out.println("Rows updated: " + result.firstUpdateCount());

ProcedureCall

Stored procedures are mapped as separate objects in CayenneModeler. ProcedureCall provides a way to execute them with a certain set of parameters. This query is a "fluent" version of the older ProcedureQuery. Just like with SQLTemplate, the outcome of a procedure can be anything: a single result set, multiple result sets, some data modification (returned as an update count), or a combination of these. So use a root class to get a single result set, and use only the procedure name for anything else:

List<Artist> result = ProcedureCall.query("my_procedure", Artist.class)
    .param("p1", "abc")
    .param("p2", 3000)
    .call(context)
    .firstList();

// here we do not bother with the root class;
// the procedure name gives us the needed routing information
ProcedureResult result = ProcedureCall.query("my_procedure")
    .param("p1", "abc")
    .param("p2", 3000)
    .call(context);

A stored procedure can return data back to the application as result sets or via OUT parameters. To simplify the processing of the query output, QueryResponse treats OUT parameters as if they were a separate result set. For stored procedures that declare any OUT or INOUT parameters, ProcedureResult has a convenient utility method to read them:

ProcedureResult result = ProcedureCall.query("my_procedure")
    .call(context);

// read OUT parameters
Object out = result.getOutParam("out_param");

There may be a situation where a stored procedure handles its own transactions, but the application is configured to use Cayenne-managed transactions. This is obviously conflicting and undesirable behavior. In this case, ProcedureQueries should be executed explicitly wrapped in an "external" Transaction. This is one of the few cases when a user should worry about transactions at all. See the Transactions section for more details.
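For comparison with the OUT parameter handling described earlier in this section, this is roughly how a procedure with an OUT parameter is called through plain JDBC, the layer ProcedureCall works on top of. The sketch uses only java.sql APIs, not Cayenne. The procedure name my_procedure, the parameter order and the VARCHAR OUT type are assumptions for illustration.

```java
import java.sql.CallableStatement;
import java.sql.Connection;
import java.sql.SQLException;
import java.sql.Types;

// Plain JDBC sketch, not Cayenne API. Procedure name, parameter order and OUT type are hypothetical.
final class JdbcProcedureSketch {

    // Builds JDBC call escape syntax, e.g. "{call my_procedure(?, ?, ?)}".
    static String callSyntax(String procedure, int paramCount) {
        StringBuilder sql = new StringBuilder("{call ").append(procedure).append('(');
        for (int i = 0; i < paramCount; i++) {
            if (i > 0) {
                sql.append(", ");
            }
            sql.append('?');
        }
        return sql.append(")}").toString();
    }

    // Assumes my_procedure(IN p1 VARCHAR, IN p2 INTEGER, OUT out_param VARCHAR).
    static String callWithOutParam(Connection connection, String p1, int p2) throws SQLException {
        try (CallableStatement call = connection.prepareCall(callSyntax("my_procedure", 3))) {
            call.setString(1, p1);
            call.setInt(2, p2);
            // OUT parameters must be registered before the statement is executed.
            call.registerOutParameter(3, Types.VARCHAR);
            call.execute();
            // The OUT value is read from the statement after execution.
            return call.getString(3);
        }
    }
}
```

Registering OUT parameters by position and reading them back after execution is the kind of bookkeeping that ProcedureResult.getOutParam hides behind a parameter name.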

Custom Queries

If a user needs some extra functionality not addressed by the existing set of Cayenne queries, they can write their own. The only requirement is to implement the org.apache.cayenne.query.Query interface. The easiest way to go about it is to subclass one of the base queries in Cayenne.

For example, to do something directly in the JDBC layer, you might subclass AbstractQuery:

public class MyQuery extends AbstractQuery {

    @Override
    public SQLAction createSQLAction(SQLActionVisitor visitor) {
        return new SQLAction() {

            @Override
            public void performAction(Connection connection, OperationObserver observer) throws SQLException, Exception {
                // 1. do some JDBC work using provided connection...
                // 2. push results back to Cayenne via OperationObserver
            }
        };
    }
}

To delegate the actual query execution to a standard Cayenne query, you may subclass IndirectQuery:

public class MyDelegatingQuery extends IndirectQuery {

    @Override
    protected Query createReplacementQuery(EntityResolver resolver) {
        SQLTemplate delegate = new SQLTemplate(SomeClass.class, generateRawSQL());
        delegate.setFetchingDataRows(true);
        return delegate;
    }

    protected String generateRawSQL() {
        // build some SQL string
    }